Network Automation Tutorial

David Barroso <dbarrosop@dravetech.com>

How to navigate this tutorial?

Press left and right to change sections. Press up and down to move within sections. Press ? anytime for help.

- Network Systems Engineer at Fastly

- Previously:

- Network Engineer at Spotify

- Network Engineer at NTT

- Network & Systems Engineer at Atlas IT

- Creator of:

Agenda

- Lab preparation

- Hello world

- Abstract Vendor Interfaces

- Abstract Vendor Configurations

- Data-Driven Configuration

- Data-Driven Configuration with a Backend

- Abstractions

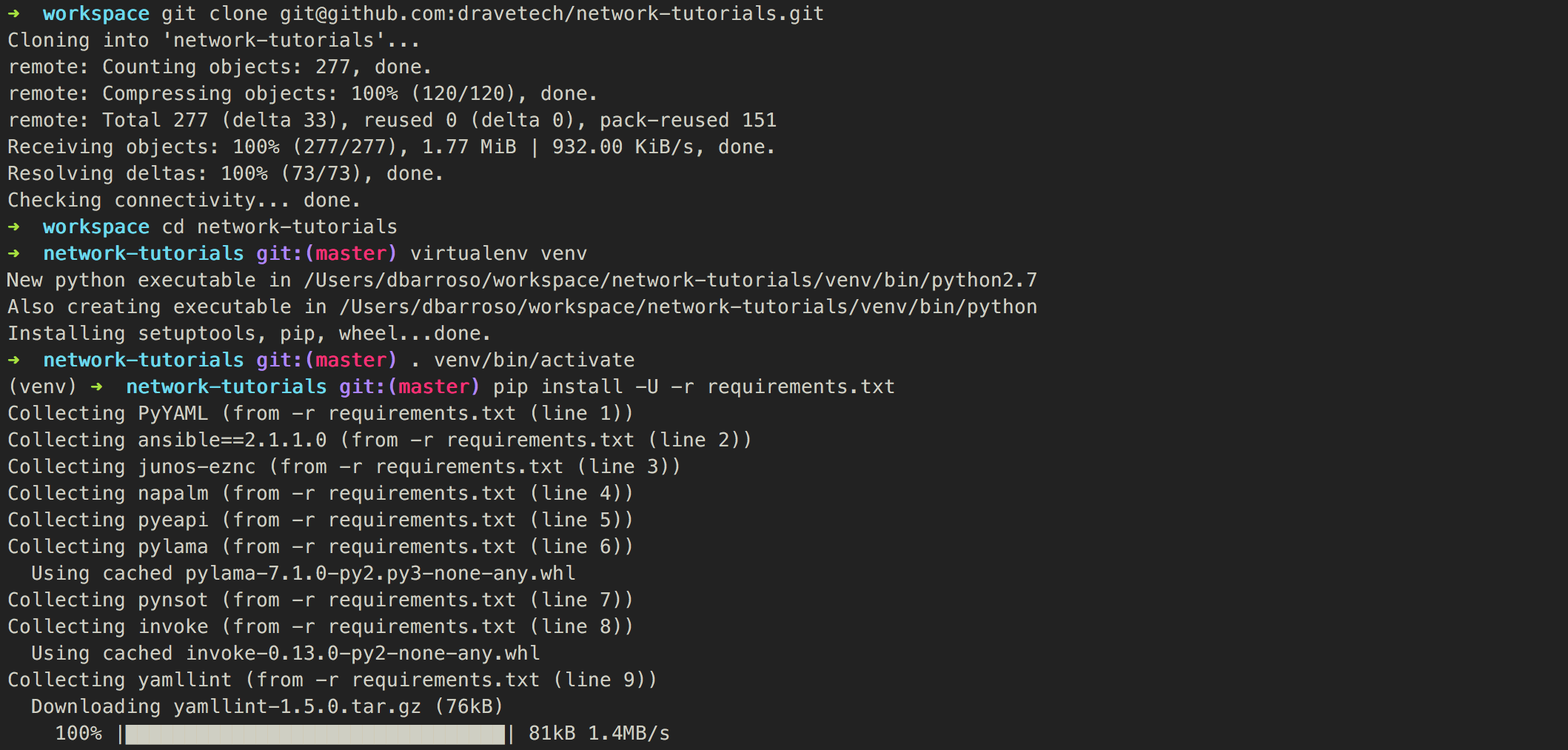

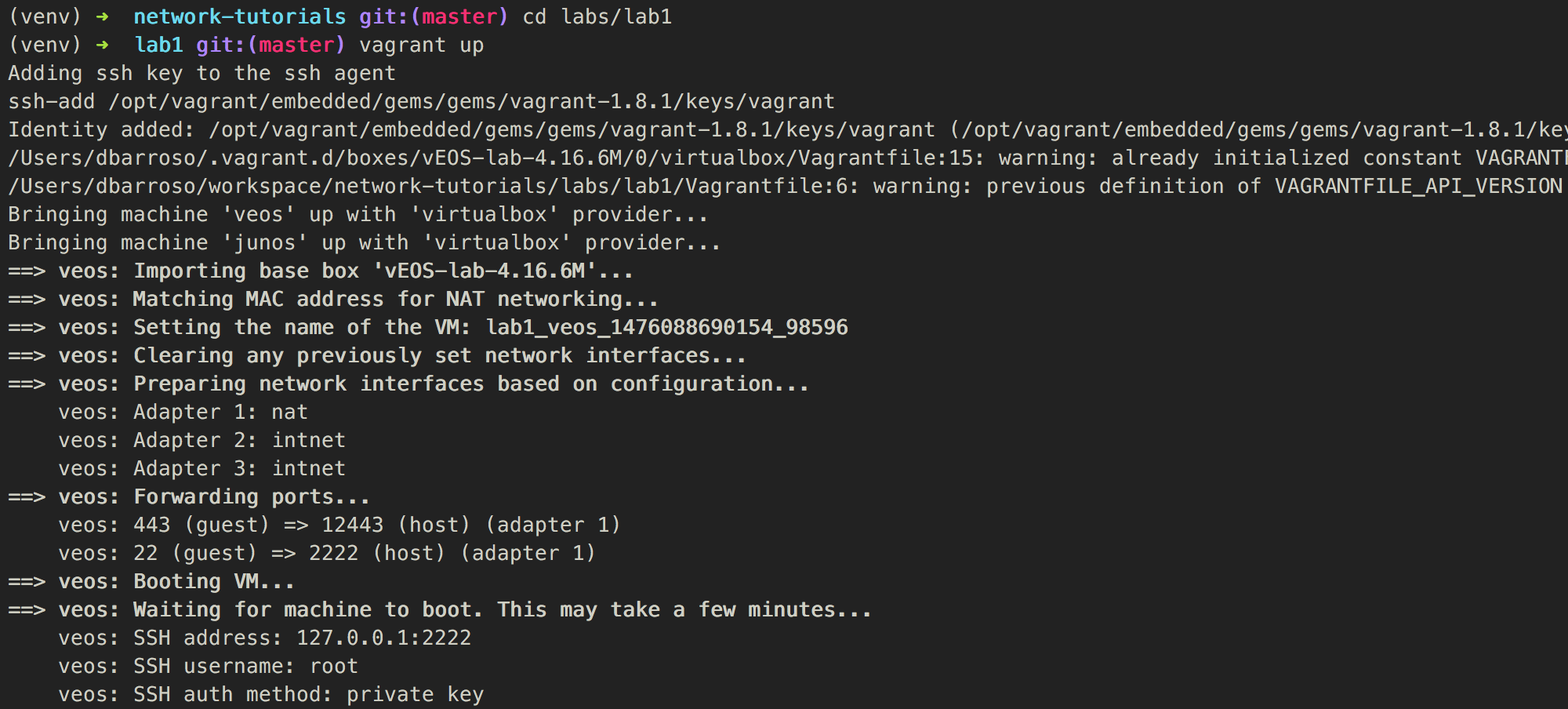

Lab preparation

Link to the code on GitHub

Objectives

- Install all of the python requirements

- Start the devices on your lab

Installing python requirements

Starting the lab

1 - hello world

Link to the code on GitHub

Objectives

Learn how to connect to different devices, gather data such as the serial number or the device model and how to manipulate the device configuration using their respective APIs.

JunOS - Retrieving facts

>>> from jnpr.junos import Device

>>> import pprint

>>> pp = pprint.PrettyPrinter(indent=4)

>>>

>>> junos = Device(host='127.0.0.1', user='vagrant', port=12203)

>>> junos.open()

Device(127.0.0.1)

>>> junos_facts = junos.facts

>>> pp.pprint(junos_facts)

{ '2RE': False,

'HOME': '/cf/var/home/vagrant',

'RE0': { 'last_reboot_reason': 'Router rebooted after a normal shutdown.',

'model': 'FIREFLY-PERIMETER RE',

'status': 'Testing',

'up_time': '3 minutes, 36 seconds'},

'domain': None,

'fqdn': 'vsrx',

'hostname': 'vsrx',

'model': 'FIREFLY-PERIMETER',

'personality': 'SRX_BRANCH',

'serialnumber': '5b2b599a283b',

'srx_cluster': False,

'version': '12.1X47-D20.7',

'version_info': junos.version_info(major=(12, 1), type=X, minor=(47, 'D', 20), build=7),

'virtual': True}

>>> junos.close()

EOS - Retrieving facts

>>> import pyeapi

>>> import pprint

>>> pp = pprint.PrettyPrinter(indent=4)

>>> connection = pyeapi.client.connect(transport='https', host='127.0.0.1', username='vagrant',

... password='vagrant', port=12443,)

>>> eos = pyeapi.client.Node(connection)

>>> eos_facts = eos.run_commands(['show version'])

>>> pp.pprint(eos_facts)

[ { u'architecture': u'i386',

u'bootupTimestamp': 1475859369.15,

u'hardwareRevision': u'',

u'internalBuildId': u'e796e94c-ba3b-4355-afcf-ef0abfbfaee3',

u'internalVersion': u'4.16.6M-3205780.4166M',

u'isIntlVersion': False,

u'memFree': 56204,

u'memTotal': 1897596,

u'modelName': u'vEOS',

u'serialNumber': u'',

u'systemMacAddress': u'08:00:27:52:27:ce',

u'version': u'4.16.6M'}]

JunOS - Changing the hostname

>>> from jnpr.junos import Device

>>> from jnpr.junos.utils.config import Config

>>>

>>> junos = Device(host='127.0.0.1', user='vagrant', port=12203)

>>> junos.open()

Device(127.0.0.1)

>>>

>>> print(junos.facts['hostname'])

vsrx

>>> junos.bind(cu=Config)

>>> junos.cu.lock()

True

>>> junos.cu.load("system {host-name new-hostname;}", format="text", merge=True)

>>> junos.cu.commit()

True

>>> junos.cu.unlock()

True

>>> junos.facts_refresh()

>>> print(junos.facts['hostname'])

new-hostname

>>> junos.close()

EOS - Changing the hostname

>>> import pyeapi

>>> connection = pyeapi.client.connect(

... transport='https',

... host='127.0.0.1',

... username='vagrant',

... password='vagrant',

... port=12443,

... )

>>> eos = pyeapi.client.Node(connection)

>>> print(eos.run_commands(['show hostname'])[0]['hostname'])

localhost

>>> eos.run_commands(['configure', 'hostname a-new-hostname'])

[{}, {}]

>>> print(eos.run_commands(['show hostname'])[0]['hostname'])

a-new-hostname

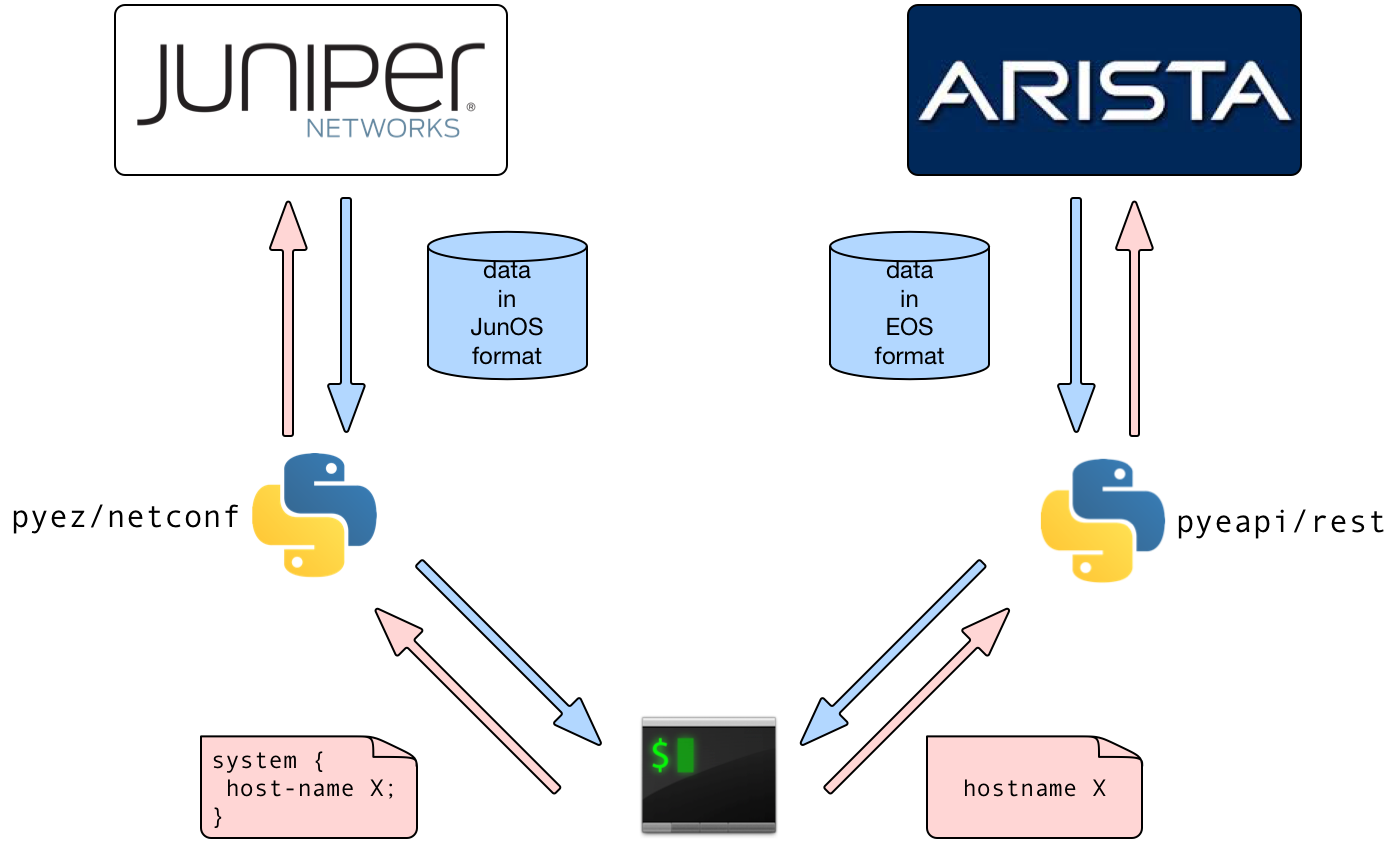

Summary

- Different devices have different APIs

- Different APIs behave differently so code is different

- Similar methods may return similar, but not identical, data structures in different formats
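To make the last point concrete, here is a minimal sketch of the per-vendor glue you end up writing when every API returns its own structure. The sample values are copied from the JunOS and EOS outputs shown earlier; the function names and the common key names are assumptions chosen for illustration.

```python
# Sample values copied from the JunOS and EOS outputs shown earlier.
junos_facts = {
    "hostname": "vsrx",
    "model": "FIREFLY-PERIMETER",
    "serialnumber": "5b2b599a283b",
    "version": "12.1X47-D20.7",
}
eos_facts = {
    "modelName": "vEOS",
    "serialNumber": "",
    "version": "4.16.6M",
}


def normalize_junos(facts):
    """Map the JunOS fact keys onto a common set of keys."""
    return {
        "vendor": "Juniper",
        "model": facts["model"],
        "serial_number": facts["serialnumber"],
        "os_version": facts["version"],
    }


def normalize_eos(show_version):
    """Map the 'show version' reply from EOS onto the same keys."""
    return {
        "vendor": "Arista",
        "model": show_version["modelName"],
        "serial_number": show_version["serialNumber"],
        "os_version": show_version["version"],
    }


inventory = [normalize_junos(junos_facts), normalize_eos(eos_facts)]
for device in inventory:
    print("{vendor:8} {model:20} {os_version}".format(**device))
```

Every new vendor needs another function like these, which is the maintenance cost the next section tries to remove.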

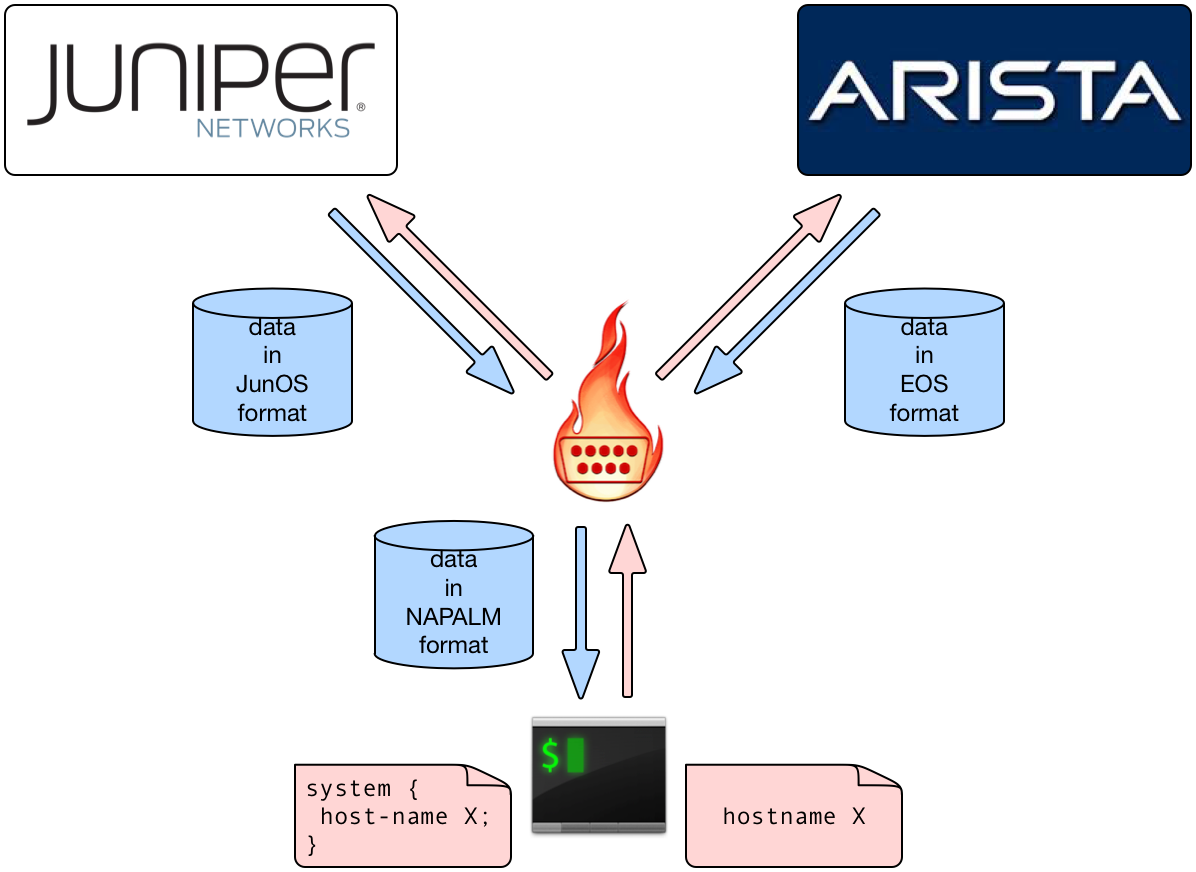

2 - Abstract vendor interfaces

Link to the code on GitHub

Objectives

Learn how to use NAPALM to abstract vendors' APIs. The code we produce in this section has the same functionality as in the previous section, but it works across different vendors.

NAPALM - Retrieving facts

>>> from napalm_base import get_network_driver

>>> import pprint

>>> pp = pprint.PrettyPrinter(indent=4)

>>>

>>> junos_driver = get_network_driver('junos')

>>> eos_driver = get_network_driver('eos')

>>>

>>> junos_configuration = {

... 'hostname': '127.0.0.1',

... 'username': 'vagrant',

... 'password': '',

... 'optional_args': {'port': 12203}

... }

>>>

>>> eos_configuration = {

... 'hostname': '127.0.0.1',

... 'username': 'vagrant',

... 'password': 'vagrant',

... 'optional_args': {'port': 12443}

... }

>>>

>>> with junos_driver(**junos_configuration) as junos:

... pp.pprint(junos.get_facts())

...

{ 'fqdn': u'new-hostname',

'hostname': u'new-hostname',

'interface_list': [ 'ge-0/0/0', 'gr-0/0/0',

'lt-0/0/0', 'mt-0/0/0', 'vlan'],

'model': u'FIREFLY-PERIMETER',

'os_version': u'12.1X47-D20.7',

'serial_number': u'5b2b599a283b',

'uptime': 1080,

'vendor': u'Juniper'}

>>>

>>> with eos_driver(**eos_configuration) as eos:

... pp.pprint(eos.get_facts())

...

{ 'fqdn': u'a-new-hostname',

'hostname': u'a-new-hostname',

'interface_list': [u'Ethernet1', u'Ethernet2',

u'Management1'],

'model': u'vEOS',

'os_version': u'4.16.6M-3205780.4166M',

'serial_number': u'',

'uptime': 1217,

'vendor': u'Arista'}

NAPALM - Changing the hostname

>>> from napalm_base import get_network_driver

>>> junos_driver = get_network_driver('junos')

>>> eos_driver = get_network_driver('eos')

>>>

>>> junos_configuration = {

... 'hostname': '127.0.0.1',

... 'username': 'vagrant',

... 'password': '',

... 'optional_args': {'port': 12203}

... }

>>>

>>> eos_configuration = {

... 'hostname': '127.0.0.1',

... 'username': 'vagrant',

... 'password': 'vagrant',

... 'optional_args': {'port': 12443}

... }

>>>

>>> def change_configuration(device, configuration):

... device.load_merge_candidate(config=configuration)

... print(device.compare_config())

... device.commit_config()

...

>>>

NAPALM - Changing the hostname (cont'd)

>>> with junos_driver(**junos_configuration) as junos:

... change_configuration(

... junos,

... "system {host-name yet-another-hostname;}"

... )

...

[edit system]

- host-name new-hostname;

+ host-name yet-another-hostname;

>>>

>>> with junos_driver(**junos_configuration) as junos:

... change_configuration(

... junos,

... "system {host-name yet-another-hostname;}"

... )

...

>>>

>>> with eos_driver(**eos_configuration) as eos:

... change_configuration(

... eos,

... 'hostname yet-another-hostname'

... )

...

@@ -8,7 +8,7 @@

!

transceiver qsfp default-mode 4x10G

!

-hostname a-new-hostname

+hostname yet-another-hostname

!

spanning-tree mode mstp

!

>>>

>>> with eos_driver(**eos_configuration) as eos:

... change_configuration(

... eos,

... 'hostname yet-another-hostname'

... )

...

>>>

Summary

- NAPALM allows you to write code that works across vendors; workflows and data retrieval are unified across platforms

- Configuration syntax is still vendor dependent (YANG support is in progress)

- Configuration changes are idempotent
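The idempotency shown above (the second run prints no diff) can also be made explicit in code. The sketch below is a variant of `change_configuration` that commits only when there is a diff. `FakeDevice` is a hypothetical stand-in so the example runs without a lab: only its four method names mirror NAPALM, and its behaviour is simulated.

```python
class FakeDevice:
    """Hypothetical stand-in for a NAPALM driver; behaviour is simulated."""

    def __init__(self, hostname):
        self.running = hostname
        self.candidate = None
        self.commits = 0

    def load_merge_candidate(self, config):
        # In this sketch "config" is simply the desired hostname.
        self.candidate = config

    def compare_config(self):
        if self.candidate is None or self.candidate == self.running:
            return ""
        return "- hostname {}\n+ hostname {}".format(self.running, self.candidate)

    def commit_config(self):
        self.running = self.candidate
        self.candidate = None
        self.commits += 1

    def discard_config(self):
        self.candidate = None


def change_configuration(device, configuration):
    """Commit only when there is a diff; return True if something changed."""
    device.load_merge_candidate(config=configuration)
    diff = device.compare_config()
    if not diff:
        device.discard_config()
        return False
    print(diff)
    device.commit_config()
    return True


device = FakeDevice("new-hostname")
first = change_configuration(device, "yet-another-hostname")
second = change_configuration(device, "yet-another-hostname")
print(first, second, device.commits)
```

Returning a boolean makes it easy for a caller to report "changed" versus "already in the desired state".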

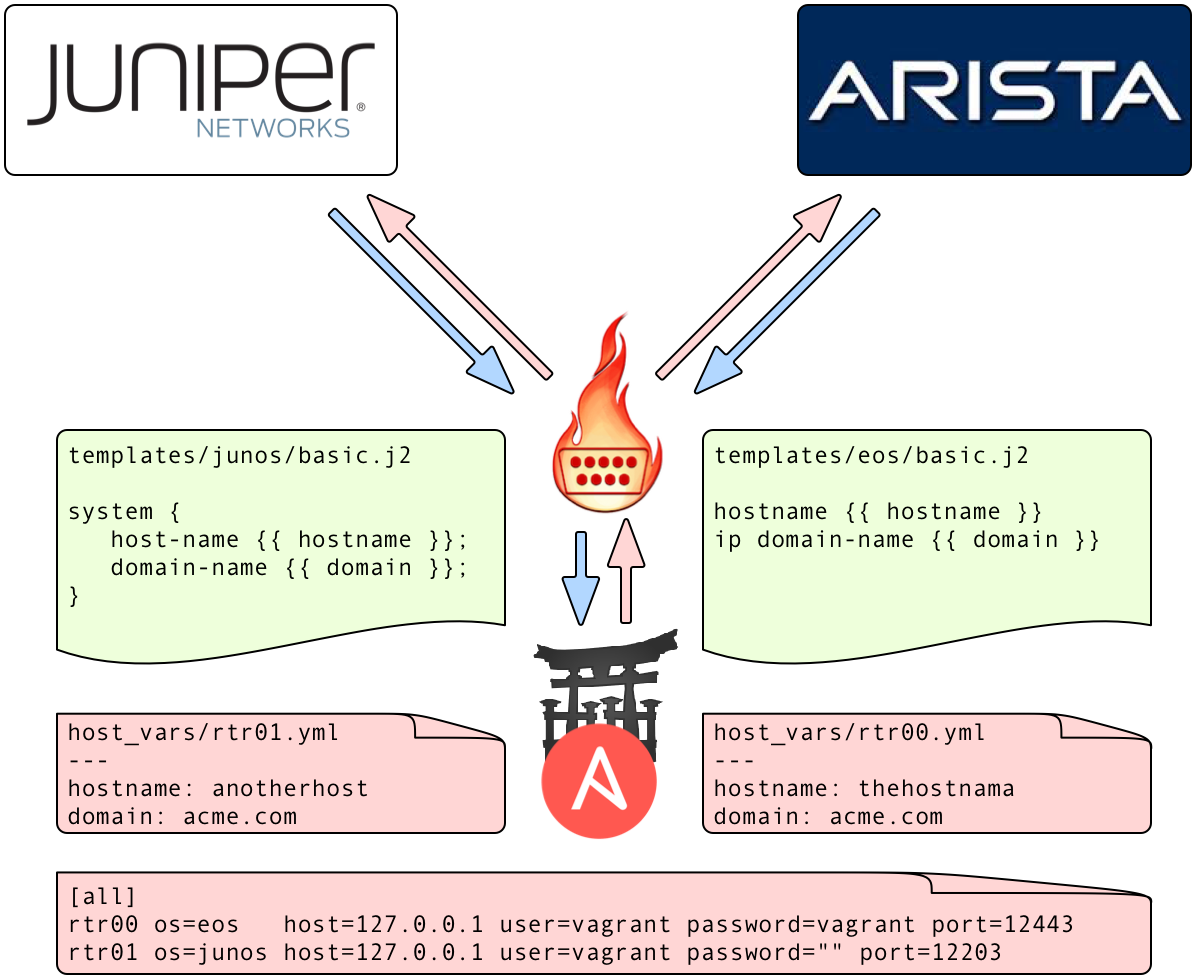

3 - Abstract vendor configurations

Link to the code on GitHub

Objectives

Learn how to use ansible and jinja2 templates to abstract configuration syntax and focus on the parameters to change

Ansible - Getting information - Playbook

---

- name: "Get facts"

hosts: all

connection: local

gather_facts: no

vars:

tasks:

- name: "get facts from device"

napalm_get_facts:

hostname: "{{ host }}"

username: "{{ user }}"

dev_os: "{{ os }}"

password: "{{ password }}"

optional_args:

port: "{{ port }}"

filter: ['facts']

register: napalm_facts

- name: Facts

debug:

msg: "{{ napalm_facts.ansible_facts.facts|to_nice_json }}"

tags: [print_action]

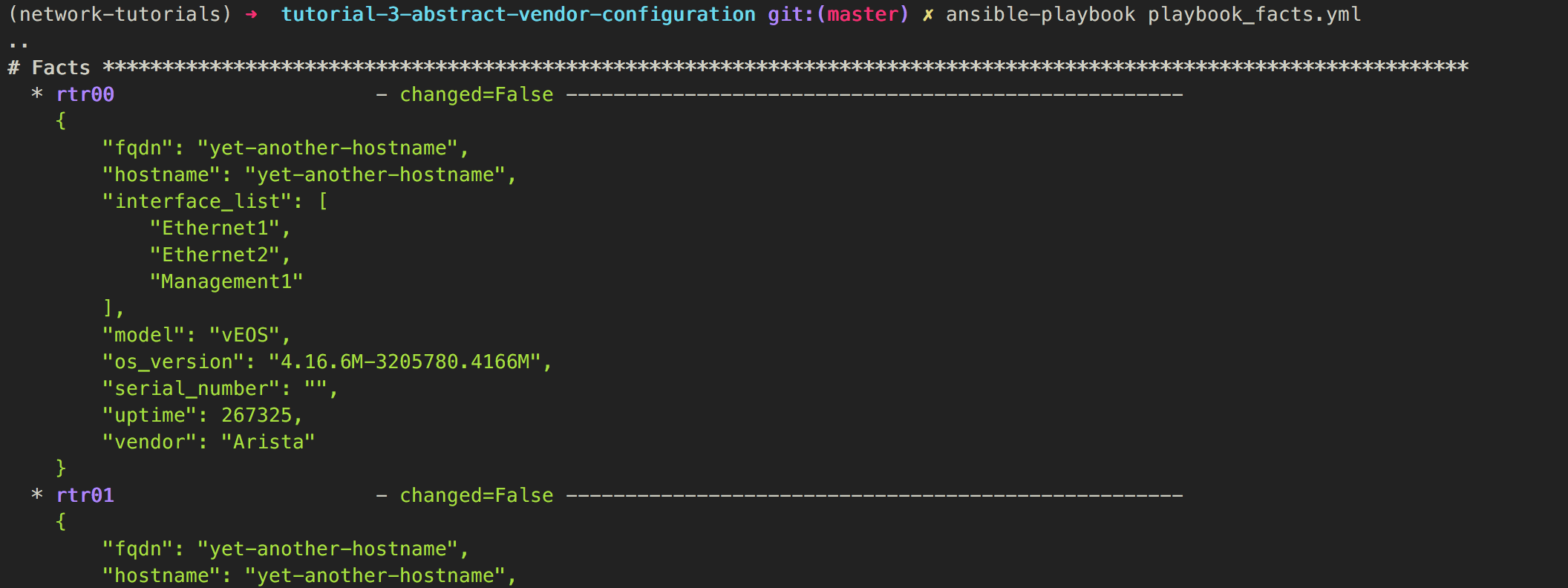

Ansible - Getting information - Run (1)

Ansible - Getting information - Run (2)

Ansible - Changing config - data

➜ cat hosts

[all]

rtr00 os=eos host=127.0.0.1 user=vagrant password=vagrant port=12443

rtr01 os=junos host=127.0.0.1 user=vagrant password="" port=12203

➜ cat group_vars/all.yml

---

ansible_python_interpreter: "/usr/bin/env python"

domain: acme.com

➜ cat host_vars/rtr00.yml

---

hostname: thehostnama

➜ cat host_vars/rtr01.yml

---

hostname: another-host

Ansible - Changing config - Templates

➜ cat templates/eos/simple.j2

hostname {{ hostname }}

ip domain-name {{ domain }}

➜ cat templates/junos/simple.j2

system {

host-name {{ hostname}};

domain-name {{ domain }};

}
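The idea behind these templates is "variables + template = configuration". The sketch below reproduces that flow in plain Python. It is an illustration only: `string.Template` from the standard library stands in for jinja2 (so `$hostname` replaces `{{ hostname }}`), and the `os` value, which really lives in the `hosts` inventory, is folded into the per-host dictionary for brevity.

```python
from string import Template

# Values copied from group_vars/all.yml, host_vars/*.yml and hosts above.
group_vars = {"domain": "acme.com"}
host_vars = {
    "rtr00": {"os": "eos", "hostname": "thehostnama"},
    "rtr01": {"os": "junos", "hostname": "another-host"},
}

# string.Template is a stand-in for jinja2 in this sketch.
templates = {
    "eos": Template("hostname $hostname\nip domain-name $domain\n"),
    "junos": Template(
        "system {\n"
        "    host-name $hostname;\n"
        "    domain-name $domain;\n"
        "}\n"
    ),
}


def render(host):
    """Merge group and host variables (host wins) and render the OS template."""
    variables = dict(group_vars)
    variables.update(host_vars[host])
    return templates[variables["os"]].substitute(variables)


for host in sorted(host_vars):
    print("# " + host)
    print(render(host))
```

The same data produces vendor-specific output purely by selecting a different template, which is what the `src: "{{ os }}/simple.j2"` line in the playbook does.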

Ansible - Changing config - Playbook

---

tasks:

- name: A simple template with some configuration

template:

src: "{{ os }}/simple.j2"

dest: "{{ host_dir }}/simple.conf"

changed_when: False

when: commit_changes == 0

- name: Load configuration into the device

napalm_install_config:

hostname: "{{ host }}"

username: "{{ user }}"

dev_os: "{{ os }}"

password: "{{ password }}"

optional_args:

port: "{{ port }}"

config_file: "{{ host_dir }}/simple.conf"

commit_changes: "{{ commit_changes }}"

replace_config: false

get_diffs: True

diff_file: "{{ host_dir }}/diff"

tags: [print_action]

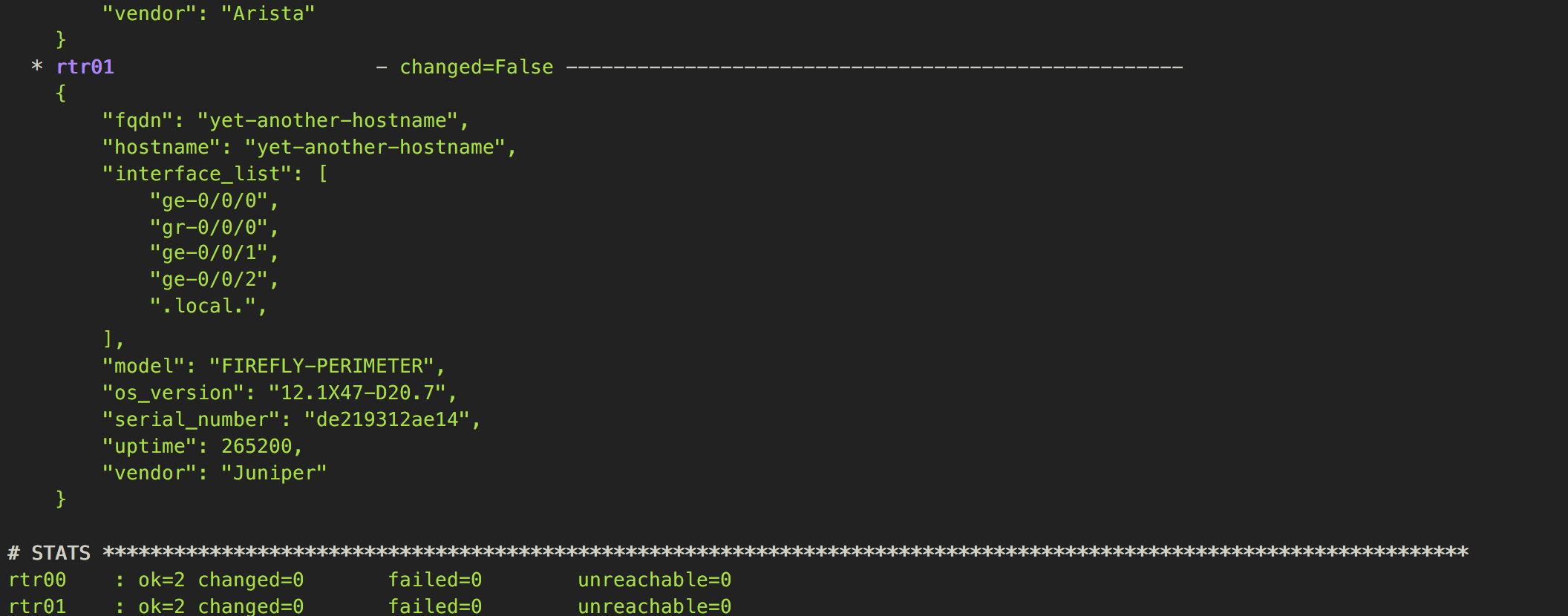

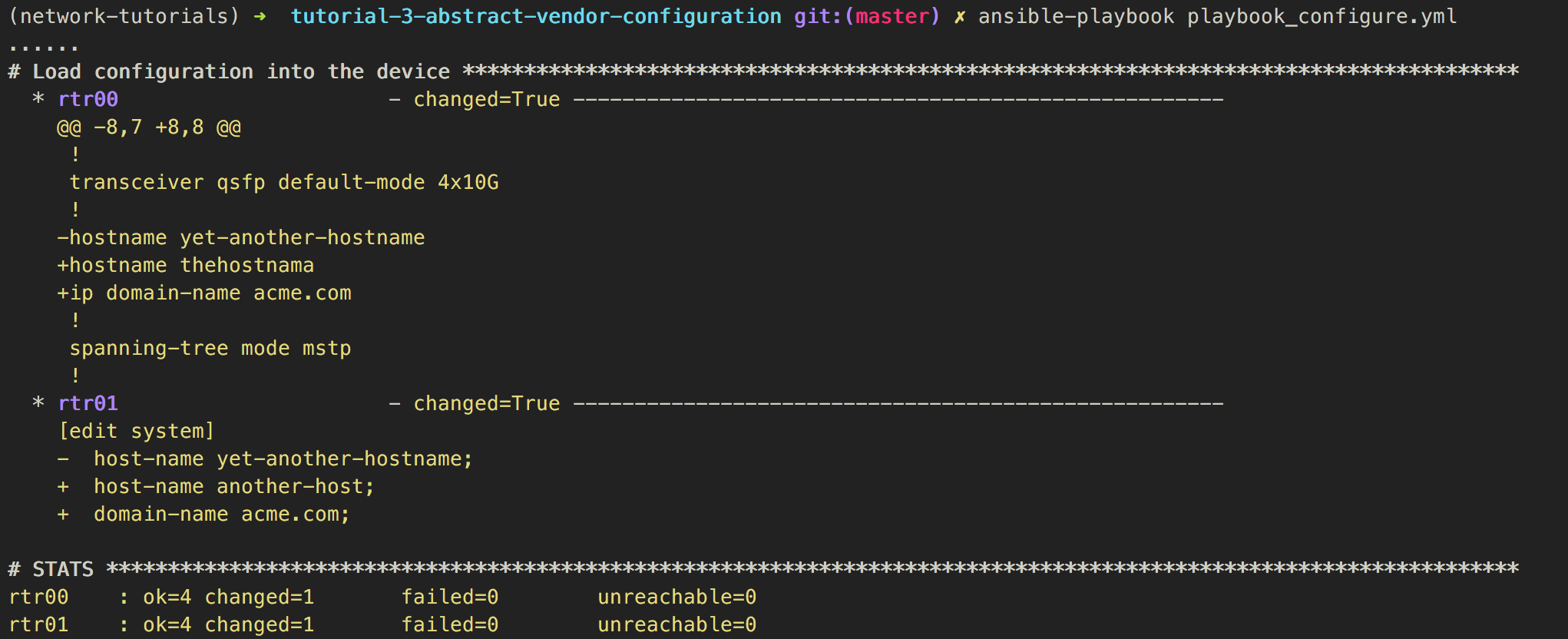

Ansible - Changing config - Dry-run

Ansible - Changing config - Commit

Ansible - Changing config - Commit (2)

Changes are idempotent


Summary

- We used ansible as our framework to build our configuration management system

- We stored variables in YAML

- We used jinja2 templates to translate variables into device configuration

- We leveraged NAPALM to seamlessly deploy the resulting configuration and to gather information

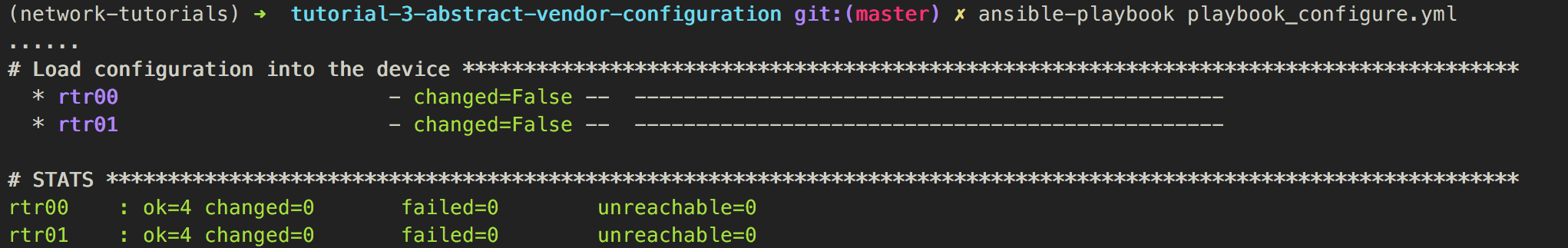

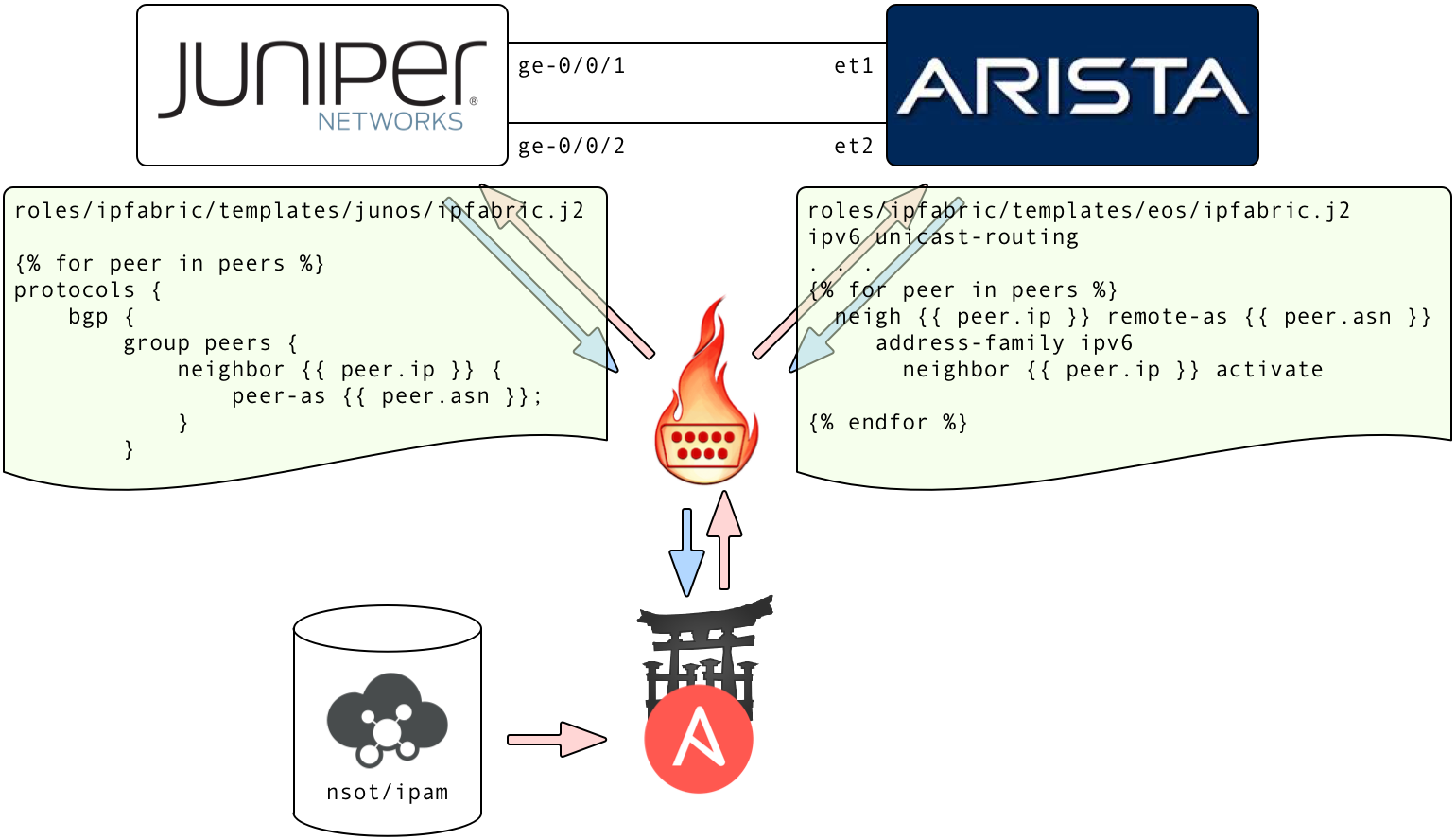

4 - data-driven configuration

Link to the code on GitHub

Objectives

We will use what we have learnt so far to build an IP fabric, using vendor-agnostic data to drive the configuration.

D-D - Changing config - data

➜ cat host_vars/rtr00.yml

---

hostname: rtr00

asn: 65000

router_id: "1.1.1.100"

interfaces:

- name: "lo0"

ip_address: "2001:db8:b33f::100/128"

- name: "et1"

ip_address: "2001:db8:caf3:1::/127"

- name: "et2"

ip_address: "2001:db8:caf3:2::/127"

peers:

- ip: "2001:db8:caf3:1::1"

asn: 65001

- ip: "2001:db8:caf3:2::1"

asn: 65001

➜ cat host_vars/rtr01.yml

---

hostname: rtr01

asn: 65001

router_id: "1.1.1.101"

interfaces:

- name: "lo0"

ip_address: "2001:db8:b33f::101/128"

- name: "ge-0/0/1"

ip_address: "2001:db8:caf3:1::1/127"

- name: "ge-0/0/2"

ip_address: "2001:db8:caf3:2::1/127"

peers:

- ip: "2001:db8:caf3:1::"

asn: 65000

- ip: "2001:db8:caf3:2::"

asn: 65000

➜ cat hosts

[all]

rtr00 os=eos host=127.0.0.1 user=vagrant password=vagrant port=12443

rtr01 os=junos host=127.0.0.1 user=vagrant password="" port=12203
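Note that the `peers` entries above duplicate information that is already implied by the `/127` interface addresses. A minimal sketch, using only the standard `ipaddress` module, of how the far end of each link could be derived and cross-checked against the static data (the function name is an assumption made for this example):

```python
import ipaddress


def peer_address(ip_with_prefix):
    """Return the other address of a /127 point-to-point link."""
    iface = ipaddress.ip_interface(ip_with_prefix)
    if iface.network.prefixlen != 127:
        raise ValueError("expected a /127, got {}".format(iface.network))
    first, second = iface.network[0], iface.network[1]
    return second if iface.ip == first else first


# Interface addresses and peers copied from host_vars/rtr00.yml above.
rtr00_links = ["2001:db8:caf3:1::/127", "2001:db8:caf3:2::/127"]
rtr00_peers = ["2001:db8:caf3:1::1", "2001:db8:caf3:2::1"]

inferred = [str(peer_address(link)) for link in rtr00_links]
print(inferred)
print(inferred == rtr00_peers)
```

A check like this catches typos in hand-maintained files before they reach a device.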

D-D Changing config - Templates

➜ cat (...)/templates/eos/ipfabric.j2

ipv6 unicast-routing

{% for interface in interfaces %}

default interface {{ interface.name }}

interface {{ interface.name }}

{{ 'no switchport' if not interface.name.startswith("lo") else "" }}

ipv6 address {{ interface.ip_address }}

{% endfor %}

route-map EXPORT-LO0 permit 10

match interface Loopback0

no router bgp

router bgp {{ asn }}

router-id {{ router_id }}

redistribute connected route-map EXPORT-LO0

{% for peer in peers %}

neighbor {{ peer.ip }} remote-as {{ peer.asn }}

address-family ipv6

neighbor {{ peer.ip }} activate

{% endfor %}

➜ cat (...)/templates/junos/ipfabric.j2

{% for interface in interfaces %}

interfaces {

replace:

{{ interface.name }} {

unit 0 {

family inet6 {

address {{ interface.ip_address }};

}

}

}

}

{% endfor %}

routing-options {

router-id {{ router_id }};

autonomous-system {{ asn }};

}

policy-options {

policy-statement EXPORT_LO0 {

from interface lo0.0;

then accept;

}

policy-statement PERMIT_ALL {

from protocol bgp;

then accept;

}

}

protocols {

replace:

bgp {

import PERMIT_ALL;

export [ EXPORT_LO0 PERMIT_ALL ];

}

}

{% for peer in peers %}

protocols {

bgp {

group peers {

neighbor {{ peer.ip }} {

peer-as {{ peer.asn }};

}

}

}

}

{% endfor %}

D-D Changing config - Playbook

...

- name: Basic Configuration

hosts: all

connection: local

roles:

- base

- name: Fabric Configuration

hosts: all

connection: local

roles:

- ipfabric

...

➜ tree roles

├── base

│ ├── tasks

│ │ └── main.yml

│ └── templates

│ ├── eos

│ │ └── simple.j2

│ └── junos

│ └── simple.j2

└── ipfabric

├── tasks

│ └── main.yml

└── templates

├── eos

│ └── ipfabric.j2

└── junos

└── ipfabric.j2

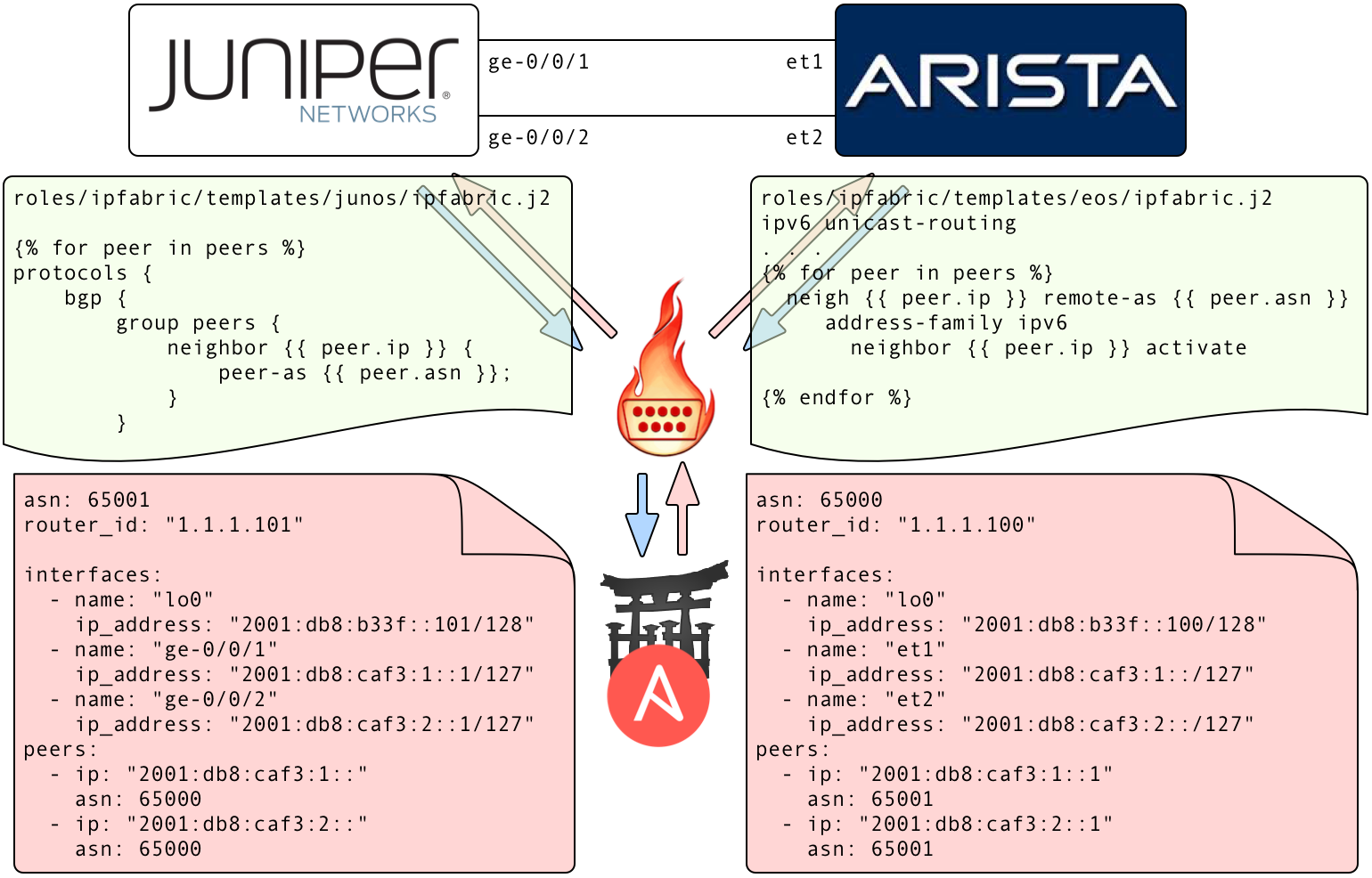

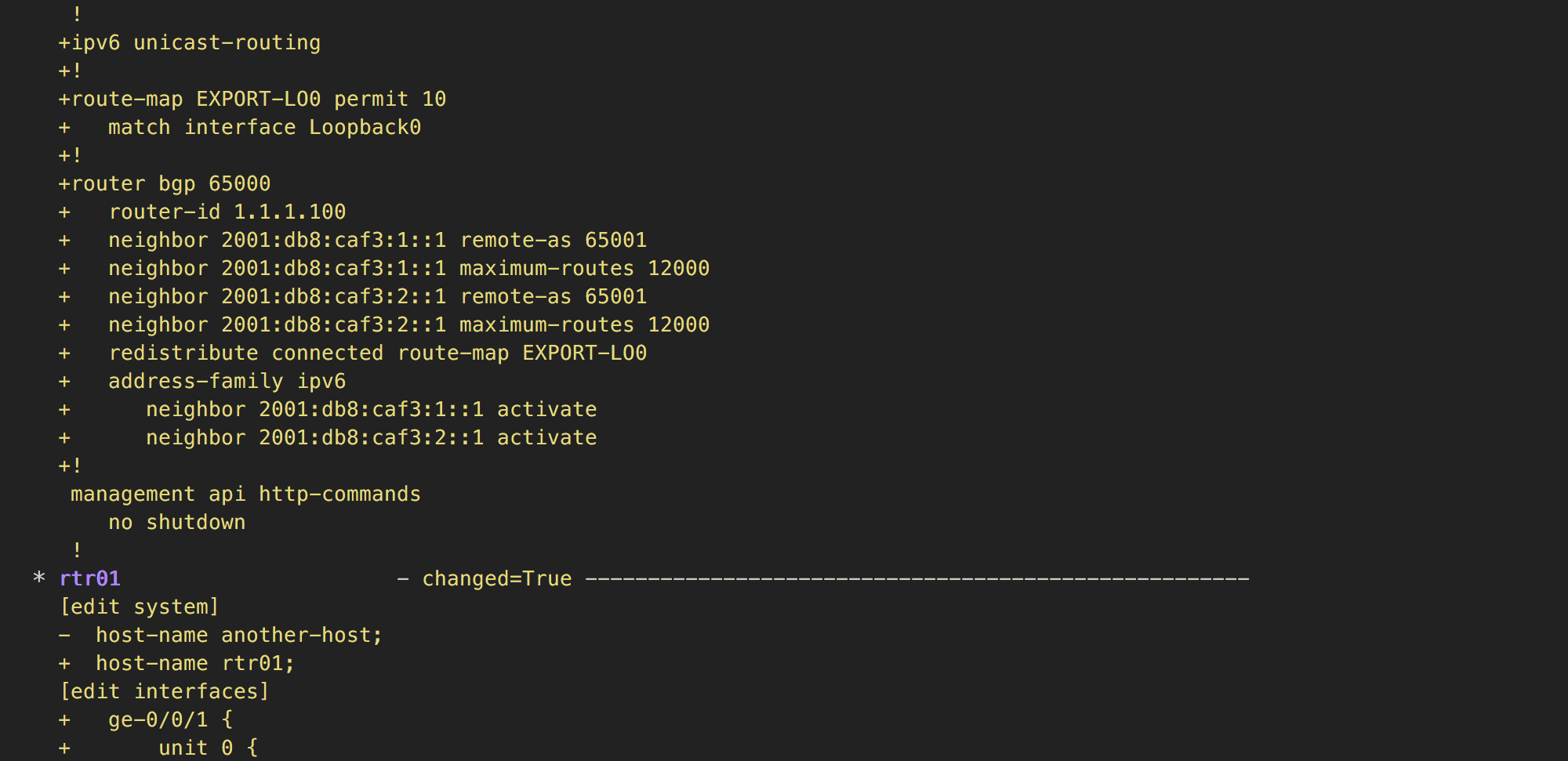

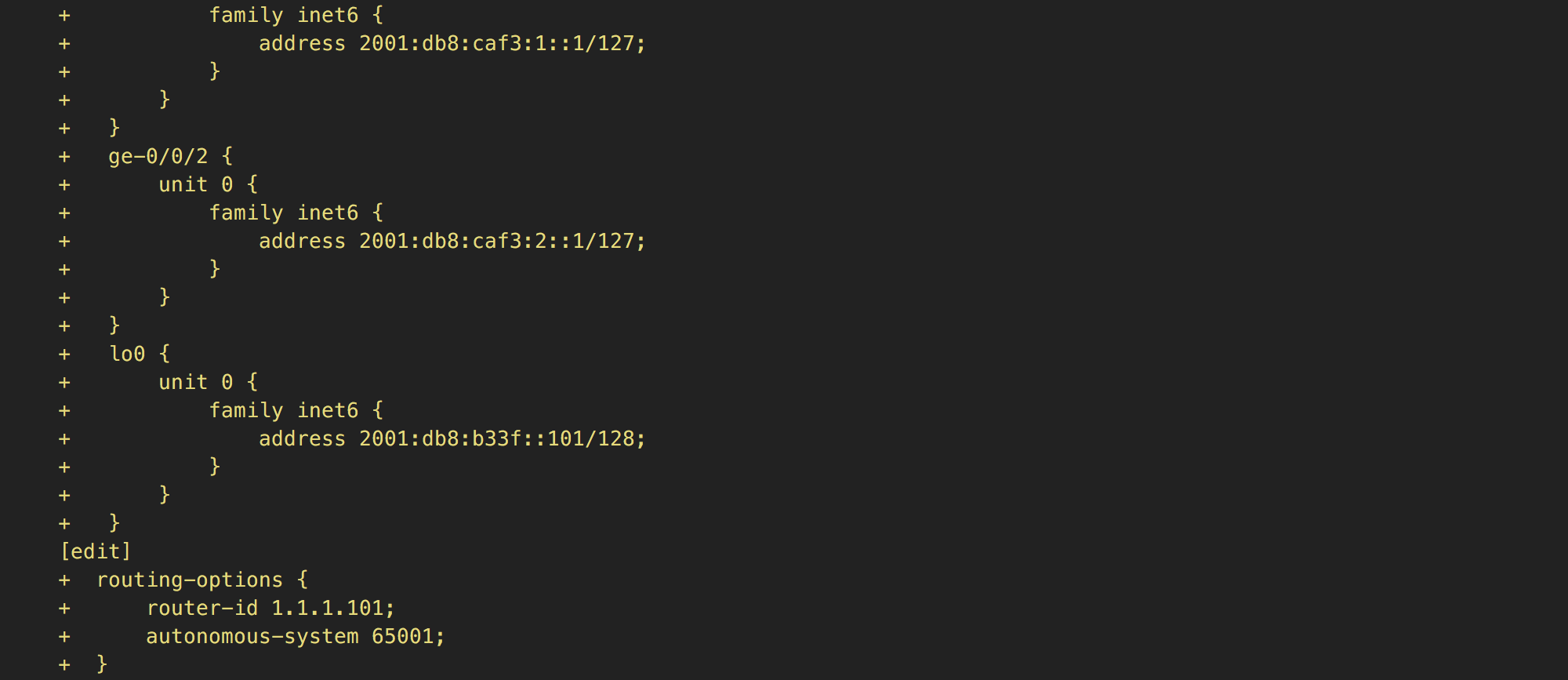

D-D Changing config - Run (1)

D-D Changing config - Run (2)

D-D Changing config - Run (3)

D-D Changing config - Run (4)

D-D Verifying BGP state - Playbook

- name: "get facts from device"

napalm_get_facts:

hostname: "{{ host }}"

username: "{{ user }}"

dev_os: "{{ os }}"

password: "{{ password }}"

optional_args:

port: "{{ port }}"

filter: ['bgp_neighbors']

register: napalm_facts

- name: "Check BGP sessions are healthy"

assert:

that:

- item.value.is_up

msg: "{{ item.key }} is down"

with_dict: "{{ napalm_facts.ansible_facts.bgp_neighbors.global.peers }}"

- name: "Check BGP sessions are receiving prefixes"

assert:

that:

- item.value.address_family.ipv6.received_prefixes > 0

msg: "{{ item.key }} is not receiving any prefixes"

with_dict: "{{ napalm_facts.ansible_facts.bgp_neighbors.global.peers }}"
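For readers more comfortable with Python than with Ansible loops, the two assertions above can be sketched as a plain function. The sample dictionary is hypothetical and only mimics the shape the playbook walks (`global`, `peers`, `is_up`, `address_family`). Unlike the playbook, which runs both checks on every peer, this version reports a session that is down only once.

```python
def check_bgp(bgp_neighbors):
    """Return a list of problems found in a bgp_neighbors-style dict."""
    problems = []
    for ip, peer in sorted(bgp_neighbors["global"]["peers"].items()):
        if not peer["is_up"]:
            problems.append("{} is down".format(ip))
        elif peer["address_family"]["ipv6"]["received_prefixes"] <= 0:
            problems.append("{} is not receiving any prefixes".format(ip))
    return problems


# Hypothetical sample, shaped like the structure the playbook walks.
sample = {
    "global": {
        "peers": {
            "2001:db8:caf3:1::1": {
                "is_up": True,
                "address_family": {"ipv6": {"received_prefixes": 2}},
            },
            "2001:db8:caf3:2::1": {
                "is_up": False,
                "address_family": {"ipv6": {"received_prefixes": -1}},
            },
        }
    }
}

for problem in check_bgp(sample):
    print(problem)
```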

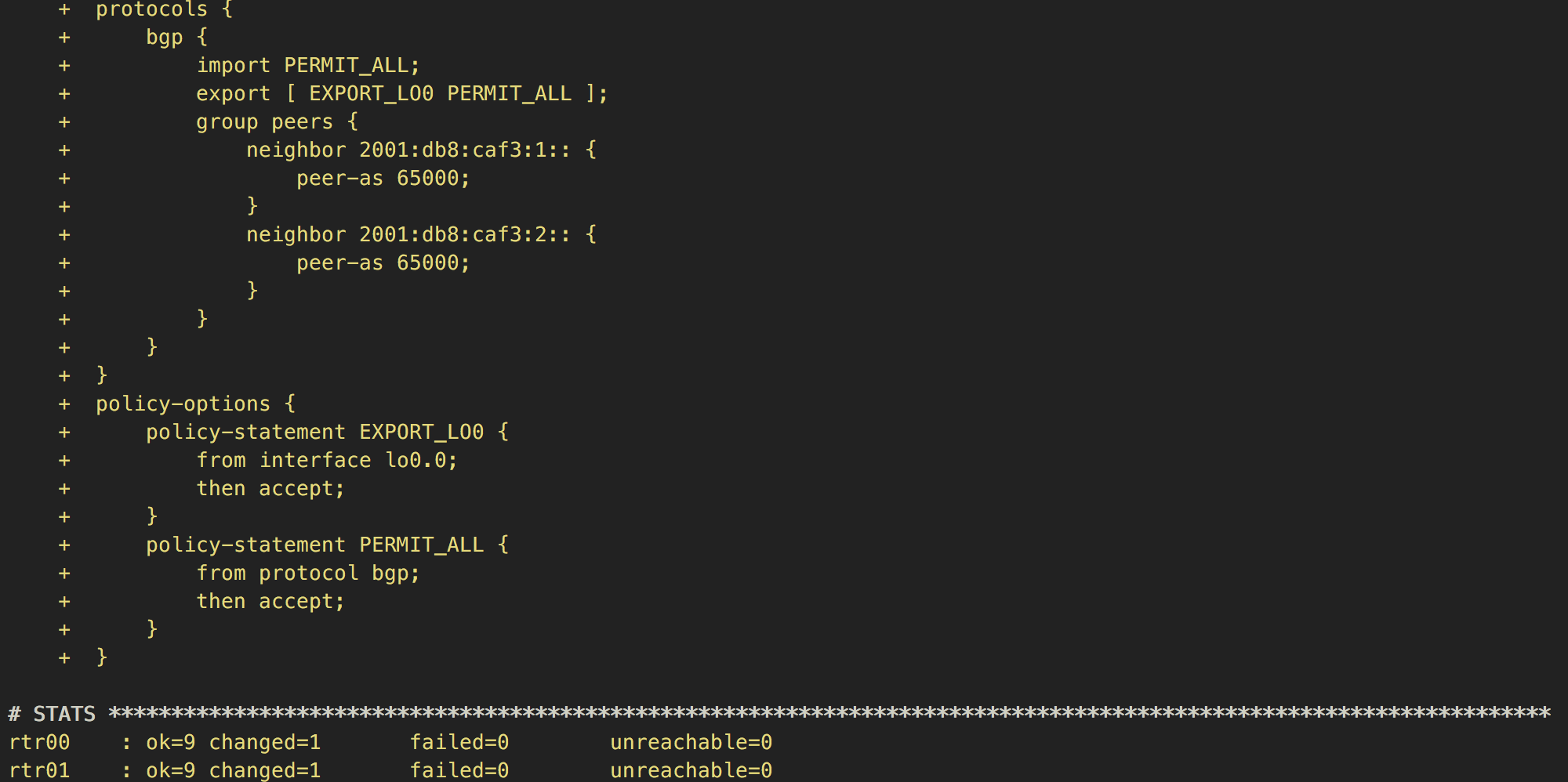

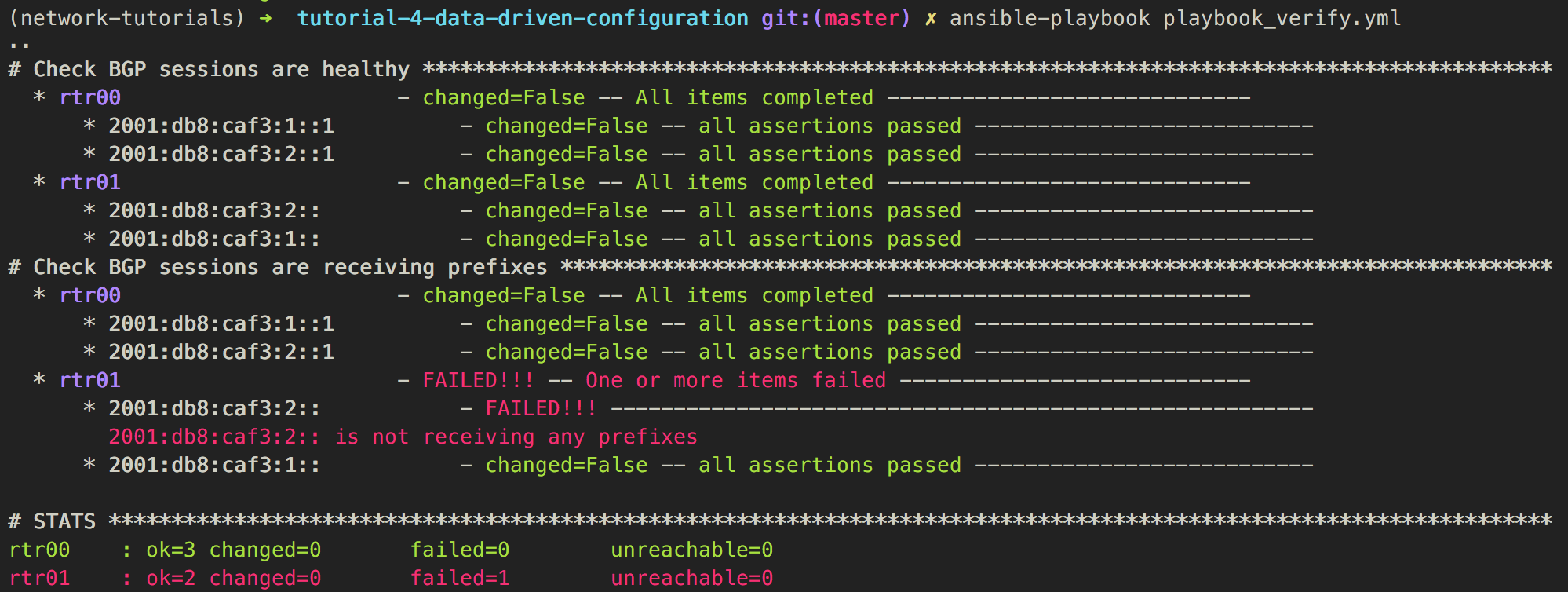

D-D Verifying BGP state - Run (1)

D-D Verifying BGP state - Run (2)

Maybe a faulty policy or missing communities?


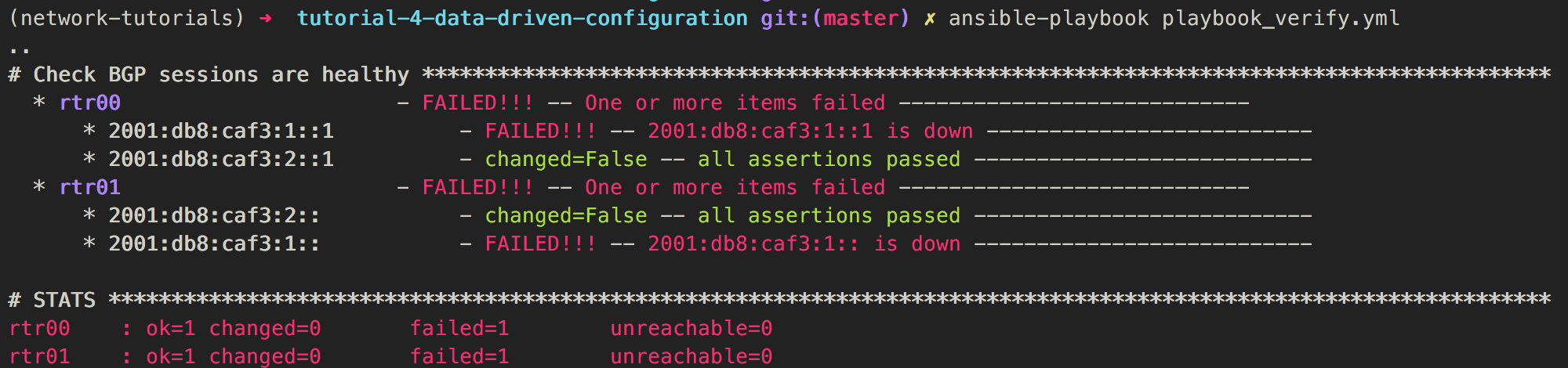

D-D Verifying BGP state - Run (3)

Most probably a faulty link


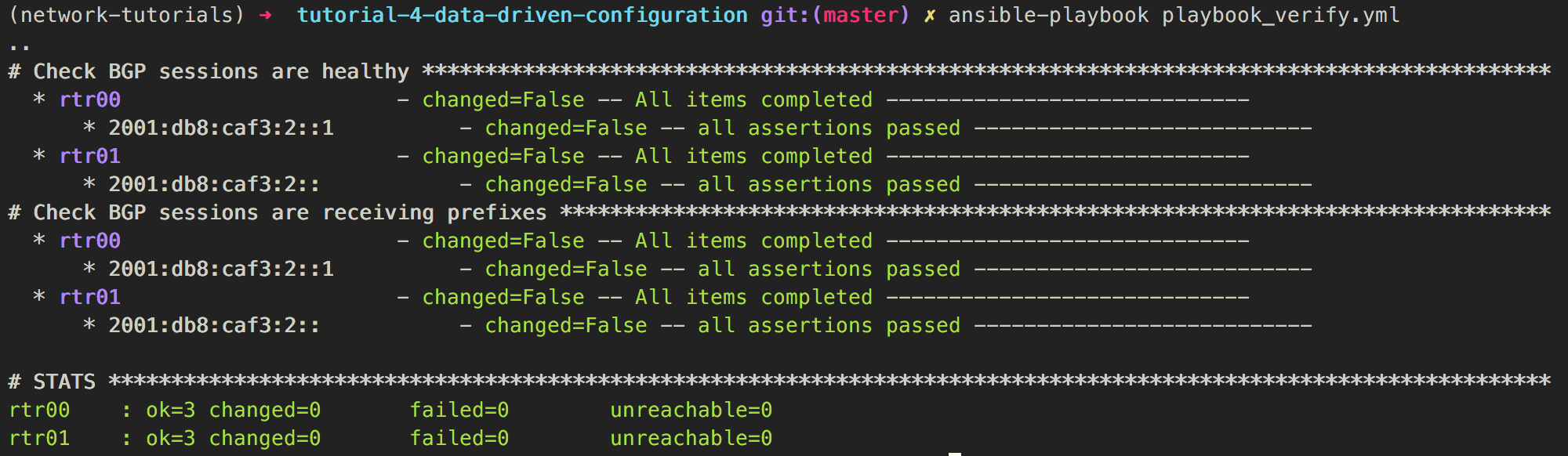

D-D Verifying BGP state - Run (4)

Looks fine but it is not: there is a link with no BGP session configured

Summary

- We used what we had learnt so far to build something more complex

- Storing data in static files makes it hard to manipulate, but it's great for mocking new services or for data that doesn't change often or doesn't need to be queried

- We verified the state of the network using the network as the source of truth rather than using our knowledge about the network

5 - Data Driven Configuration with a backend

Link to the code on GitHub

Objectives

Similar to the previous scenario, but this time we will use a backend to store and manipulate the data.

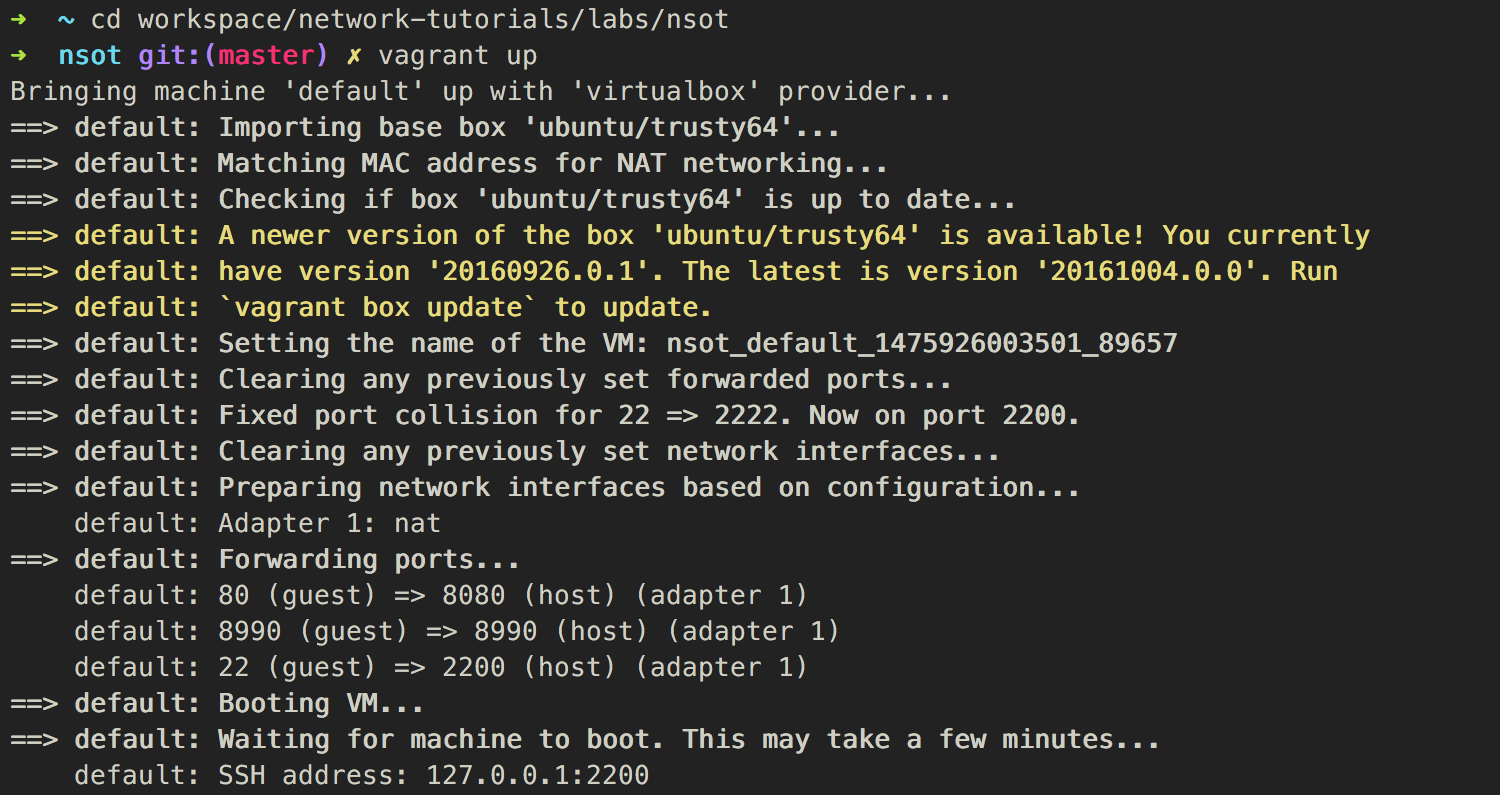

DDB - Before we begin - Start nsot VM

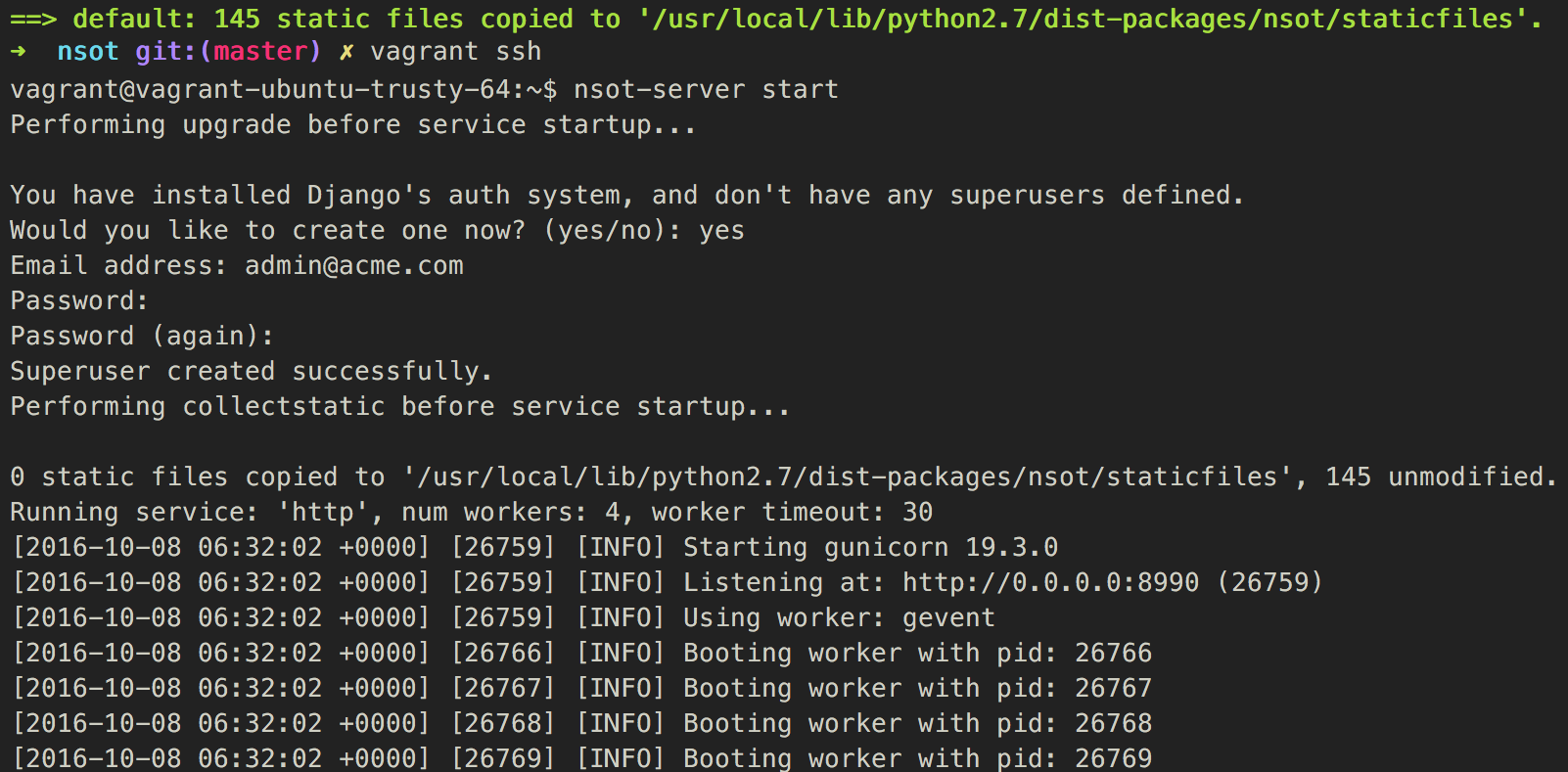

DDB - Before we begin - Start nsot

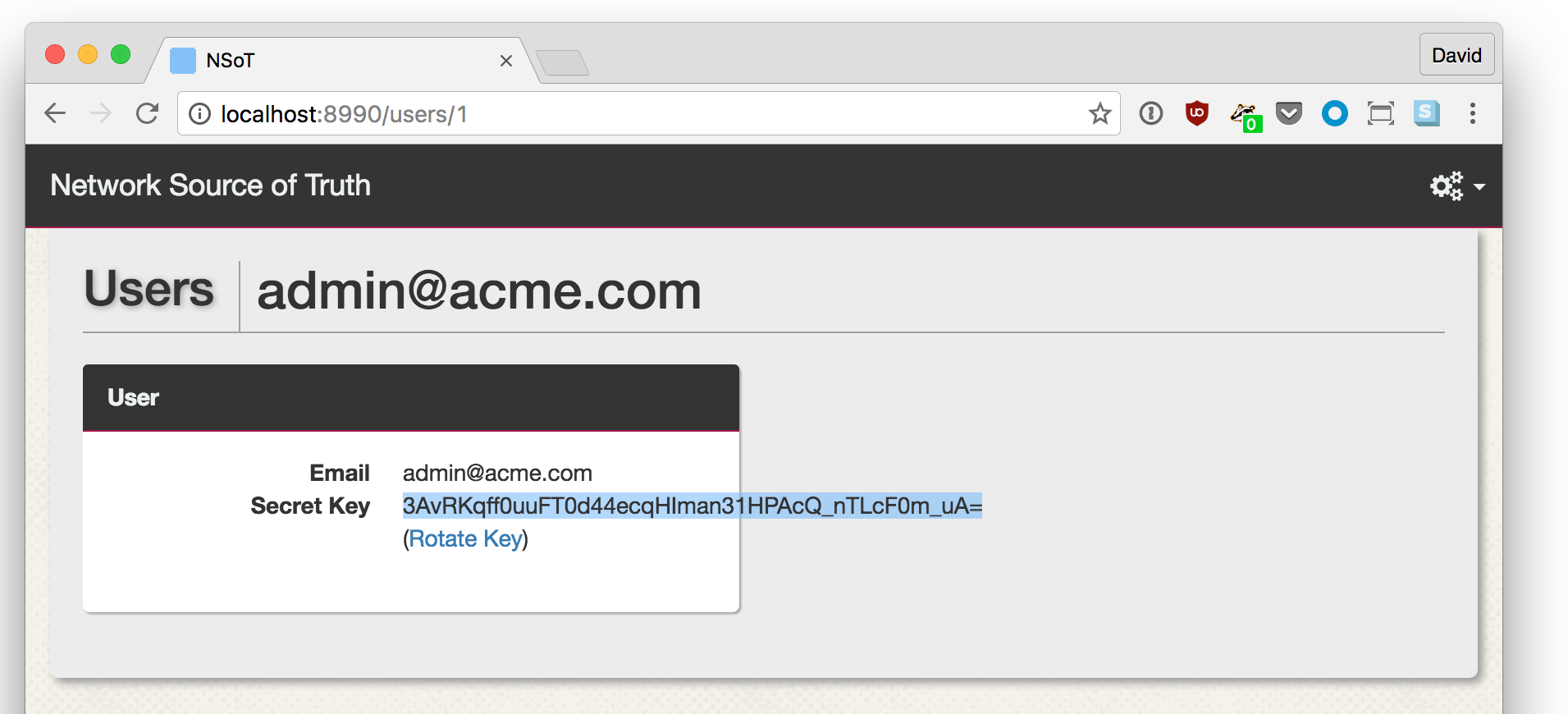

DDB - Before we begin - Gather nsot token

DDB - Before we begin - nsot client conf

~/.pynsotrc

[pynsot]

url = http://localhost:8990/api

secret_key = 3AvRKqff0uuFT0d44ecqHIman31HPAcQ_nTLcF0m_uA= # Token

auth_method = auth_token

email = admin@acme.com

Make sure you replace the secret_key with the token you gathered in the previous step.
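Before moving on, a quick sanity check of the file can save some debugging. The sketch below parses the same INI layout with the standard `configparser` module and fails if a key is missing. It does not claim to mirror how the nsot client itself reads the file; the token is a placeholder and `load_settings` is a name made up for this example.

```python
import configparser

# Same layout as ~/.pynsotrc above; the token is a placeholder.
SAMPLE = """
[pynsot]
url = http://localhost:8990/api
secret_key = REPLACE-WITH-YOUR-TOKEN # Token
auth_method = auth_token
email = admin@acme.com
"""

REQUIRED = ("url", "secret_key", "auth_method", "email")


def load_settings(text):
    """Parse the INI text and return the [pynsot] section as a dict."""
    # Treat " # ..." at the end of a value as an inline comment.
    parser = configparser.ConfigParser(inline_comment_prefixes=("#",))
    parser.read_string(text)
    settings = dict(parser["pynsot"])
    missing = [key for key in REQUIRED if not settings.get(key)]
    if missing:
        raise ValueError("missing settings: " + ", ".join(missing))
    return settings


settings = load_settings(SAMPLE)
print(settings["url"], settings["auth_method"])
```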

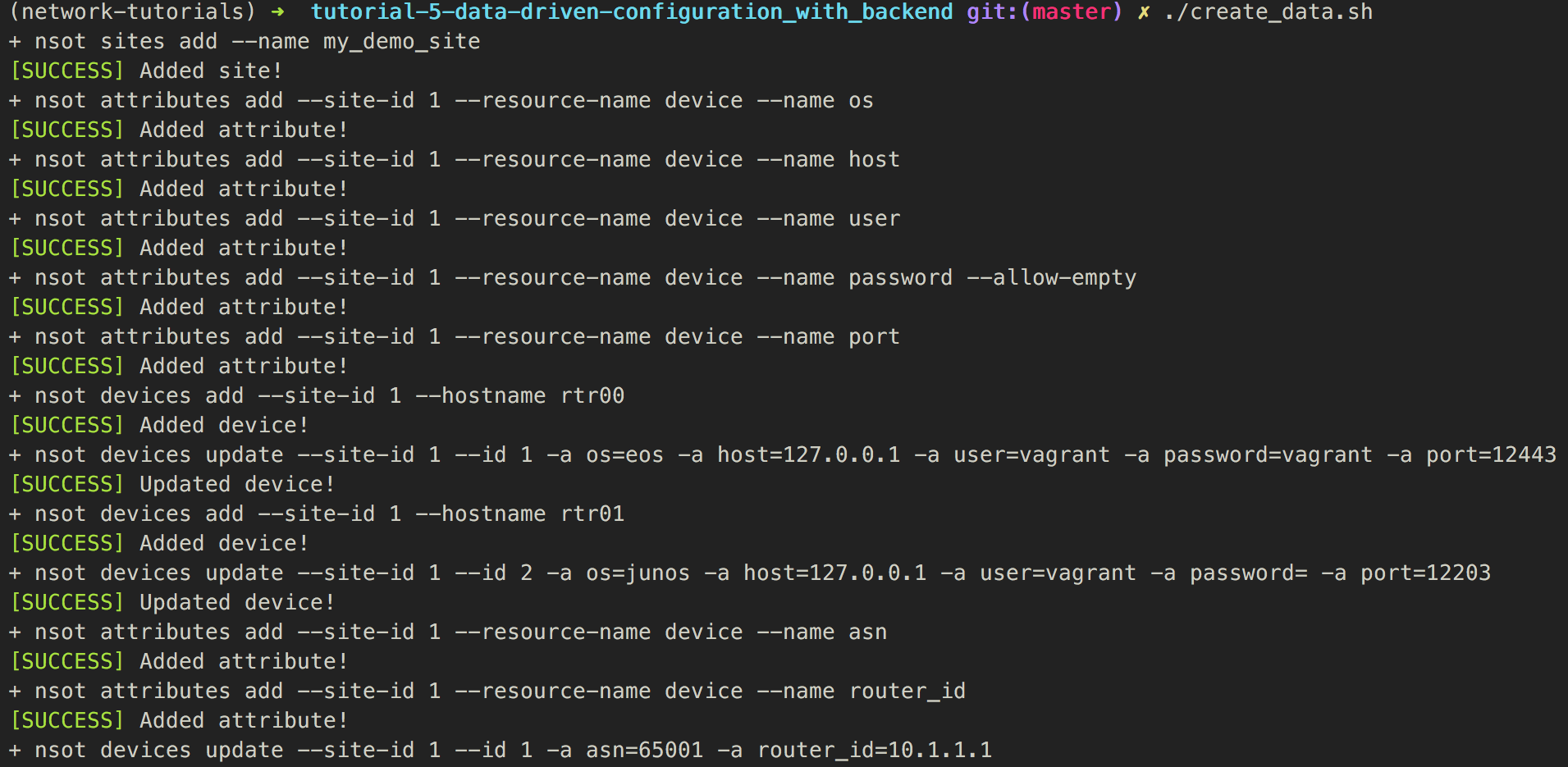

DDB - Before we begin - Create the data

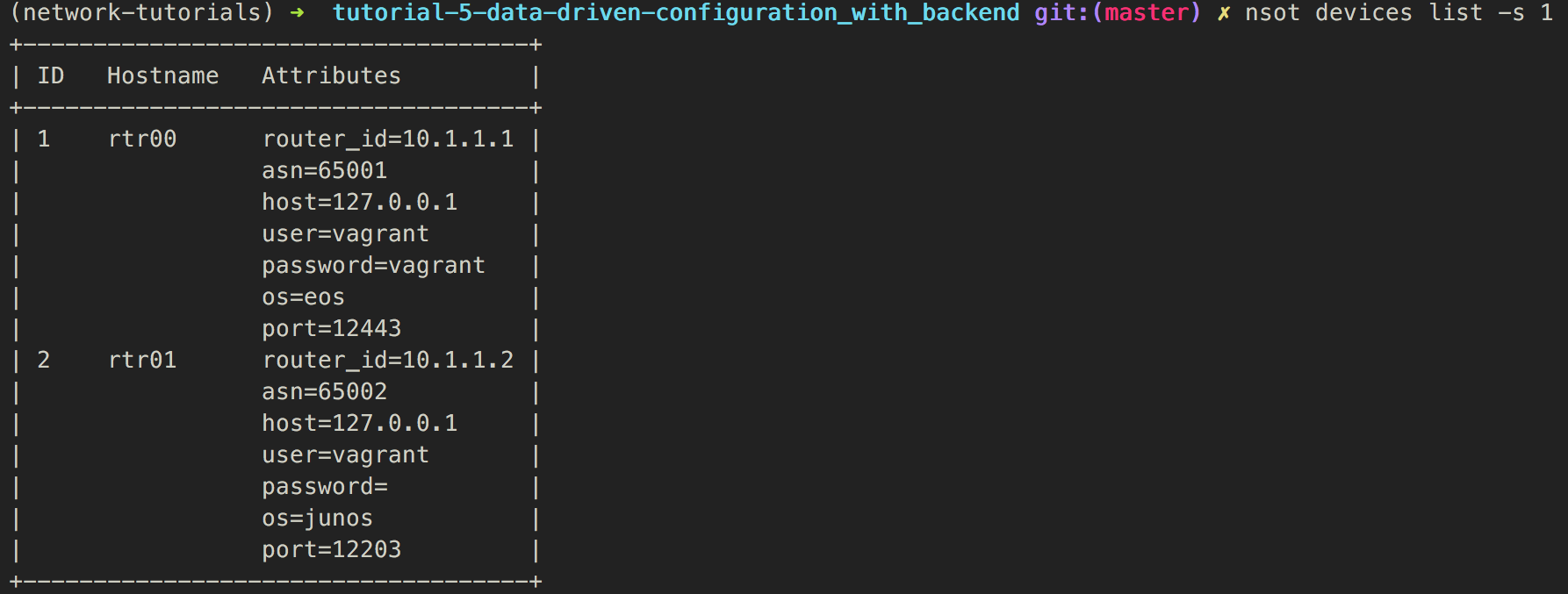

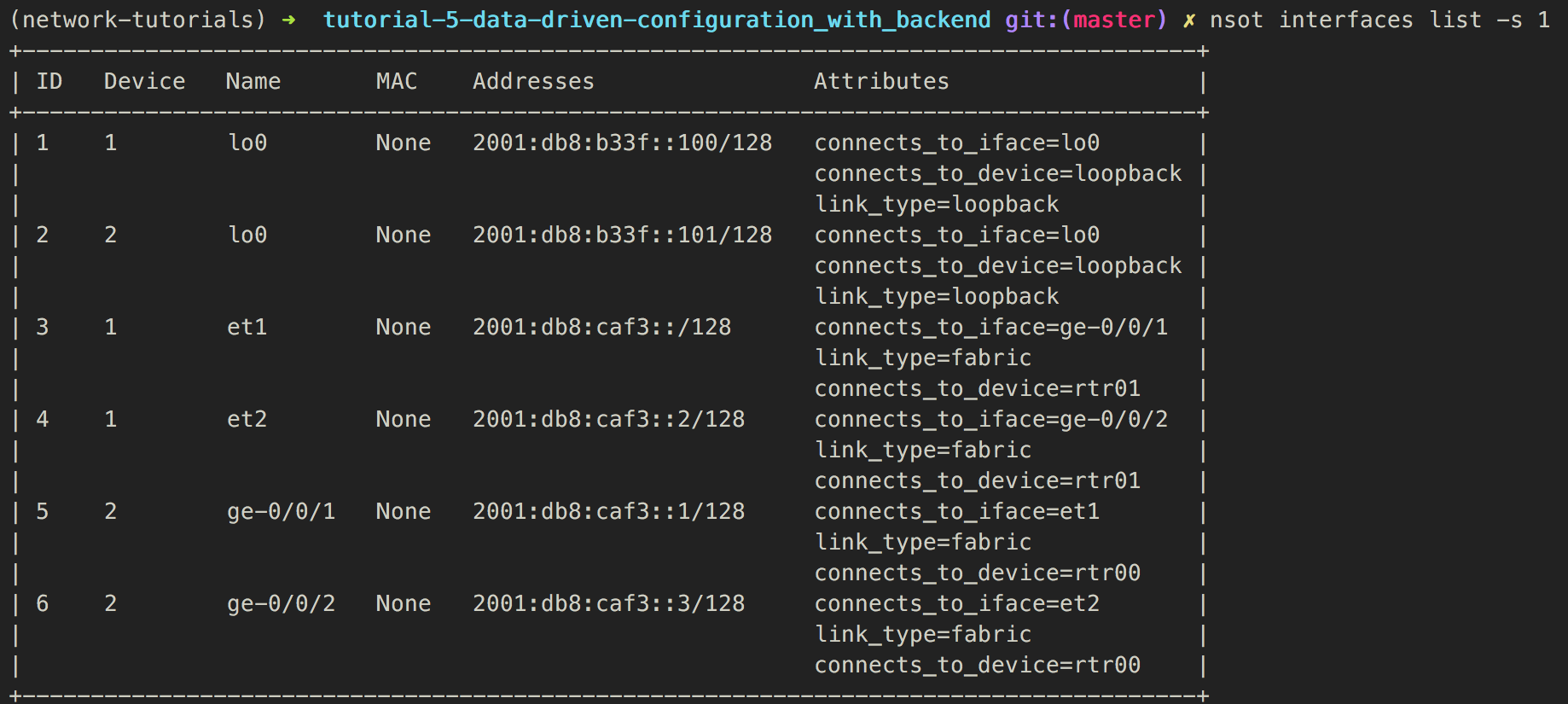

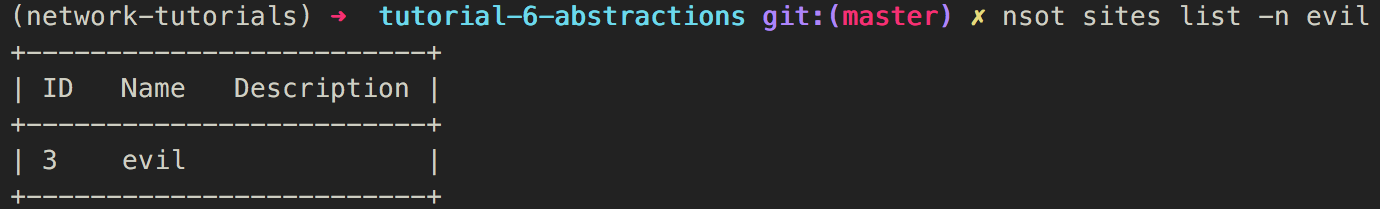

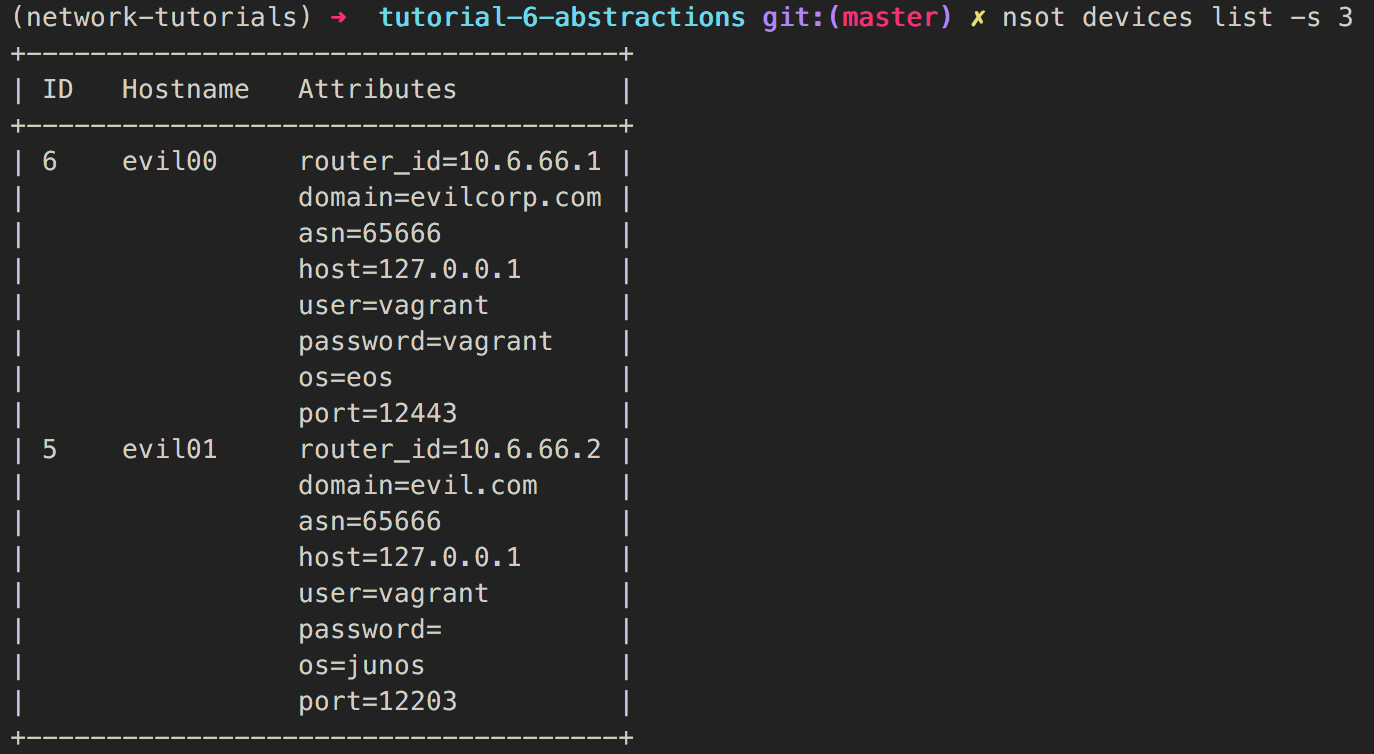

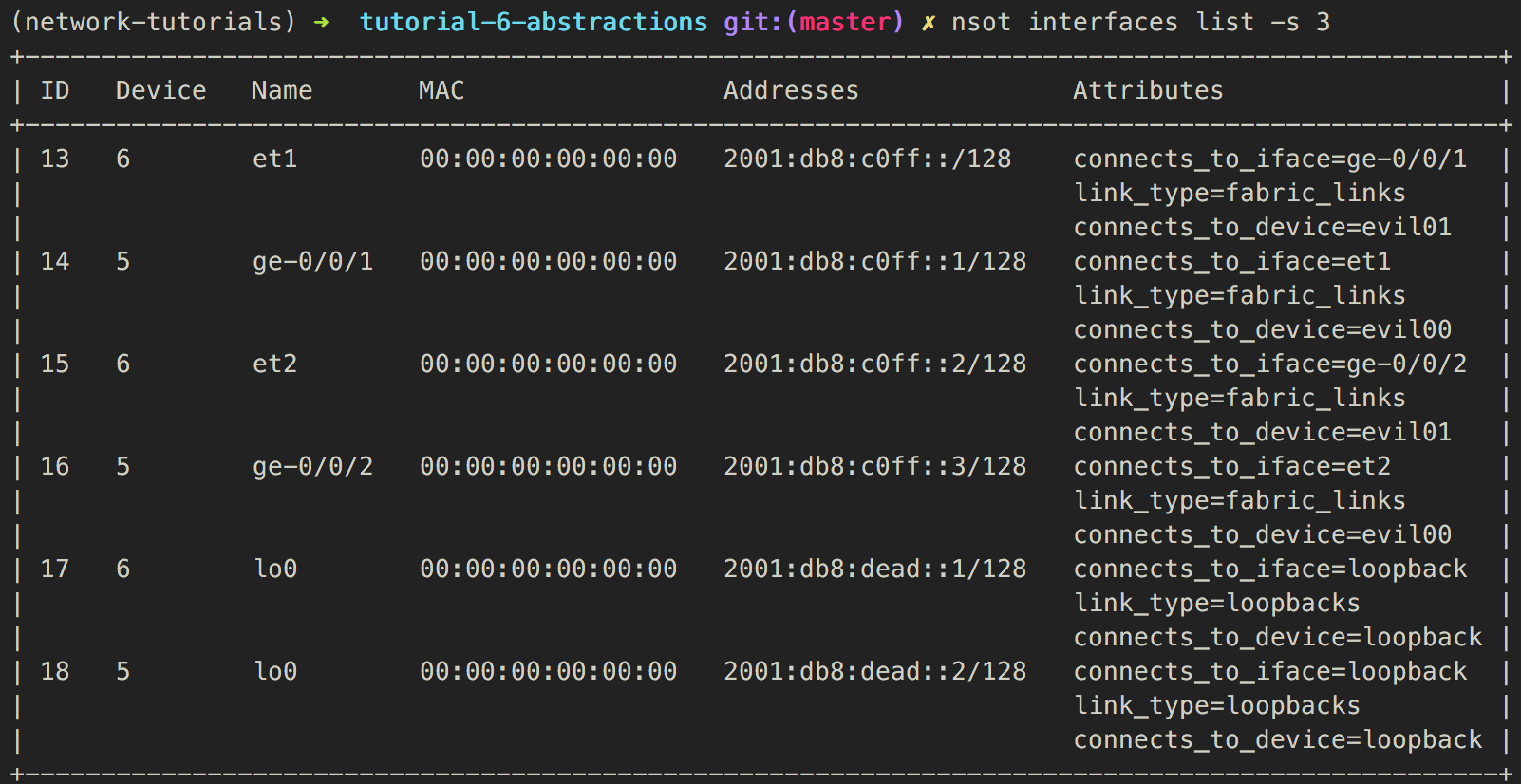

DDB - Before we begin - Verify the data (1)

DDB - Before we begin - Verify the data (2)

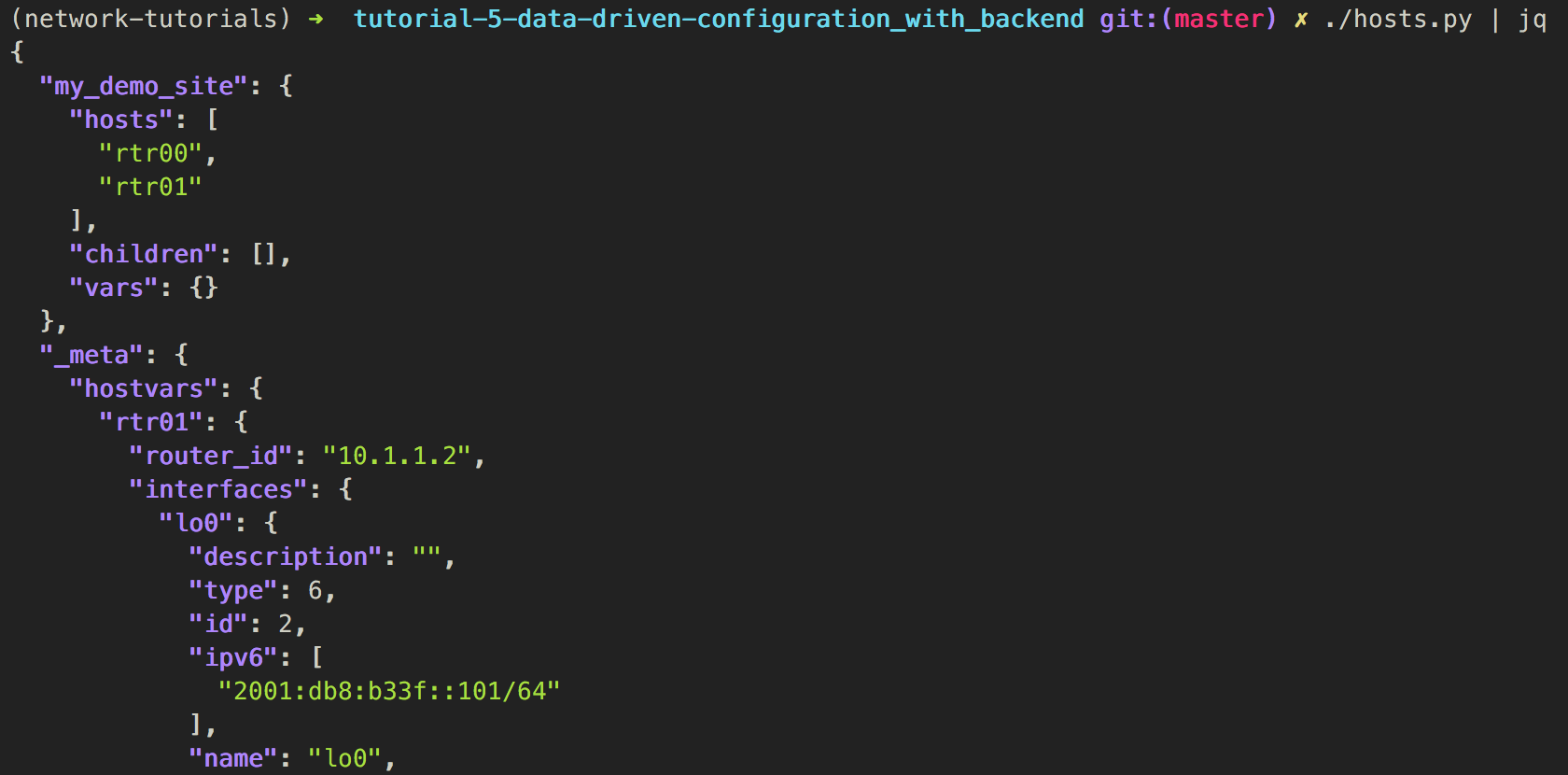

DDB - backend integration - inventory

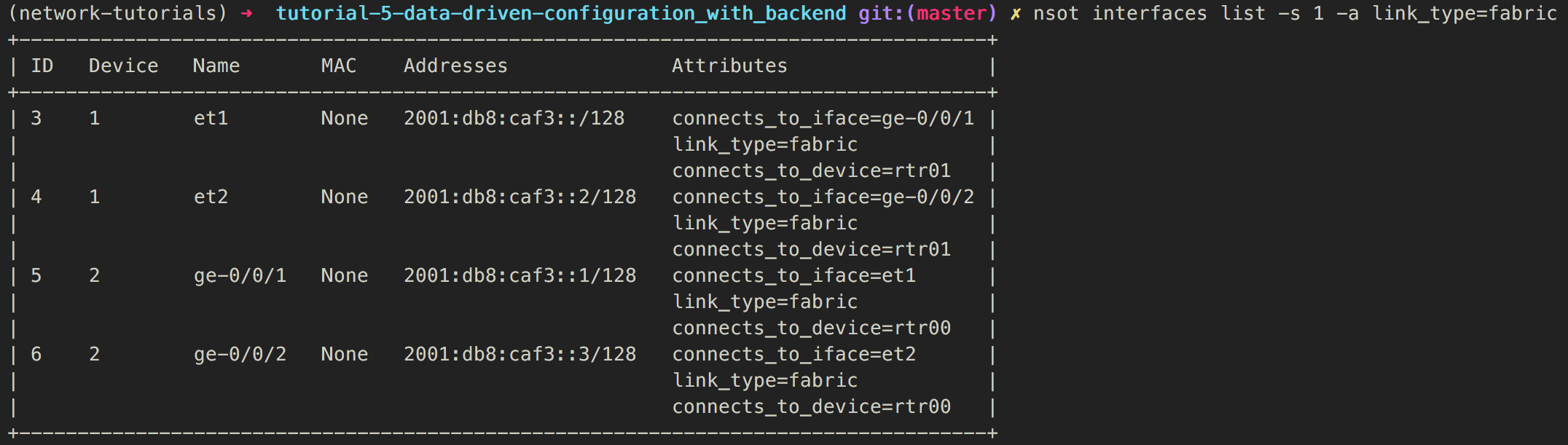

DDB - backend integration - Smarter data

{% for iface, iface_data in ifaces.items() if iface_data.attributes.link_type == "fabric" %}

{% set peer = iface_data.attributes.connects_to_device %}

{% set peer_iface = iface_data.attributes.connects_to_iface %}

{% set peer_ip = hostvars[peer]['interfaces'][peer_iface]['ipv6'][0].split('/')[0] %}

{% set peer_asn = hostvars[peer]['asn'] %}

neighbor {{ peer_ip }} remote-as {{ peer_asn }}

neighbor {{ peer_ip }} description {{ peer }}:{{ peer_iface }}

address-family ipv6

neighbor {{ peer_ip }} activate

{% endfor %}
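The template above infers each BGP neighbor from the link attributes instead of reading a hand-written `peers` list. The same lookup, sketched in Python so each step can be followed one at a time. The `hostvars` content is hypothetical and only mimics the shape the template expects; the `loopback` link type is an assumption.

```python
def fabric_neighbors(host, hostvars):
    """Yield (peer_ip, peer_asn, description) for every fabric link of host."""
    for iface, data in sorted(hostvars[host]["interfaces"].items()):
        attrs = data["attributes"]
        if attrs.get("link_type") != "fabric":
            continue
        peer = attrs["connects_to_device"]
        peer_iface = attrs["connects_to_iface"]
        # First IPv6 address on the far-end interface, without the prefix length.
        peer_ip = hostvars[peer]["interfaces"][peer_iface]["ipv6"][0].split("/")[0]
        yield peer_ip, hostvars[peer]["asn"], "{}:{}".format(peer, peer_iface)


# Hypothetical data shaped like what the template expects.
hostvars = {
    "rtr00": {
        "asn": 65001,
        "interfaces": {
            "lo0": {"ipv6": ["2001:db8:feed::1/128"],
                    "attributes": {"link_type": "loopback"}},
            "et1": {"ipv6": ["2001:db8:cafe::/127"],
                    "attributes": {"link_type": "fabric",
                                   "connects_to_device": "rtr01",
                                   "connects_to_iface": "ge-0/0/1"}},
        },
    },
    "rtr01": {
        "asn": 65002,
        "interfaces": {
            "ge-0/0/1": {"ipv6": ["2001:db8:cafe::1/127"],
                         "attributes": {"link_type": "fabric",
                                        "connects_to_device": "rtr00",
                                        "connects_to_iface": "et1"}},
        },
    },
}

for peer_ip, peer_asn, description in fabric_neighbors("rtr00", hostvars):
    print("neighbor {} remote-as {}".format(peer_ip, peer_asn))
    print("neighbor {} description {}".format(peer_ip, description))
```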

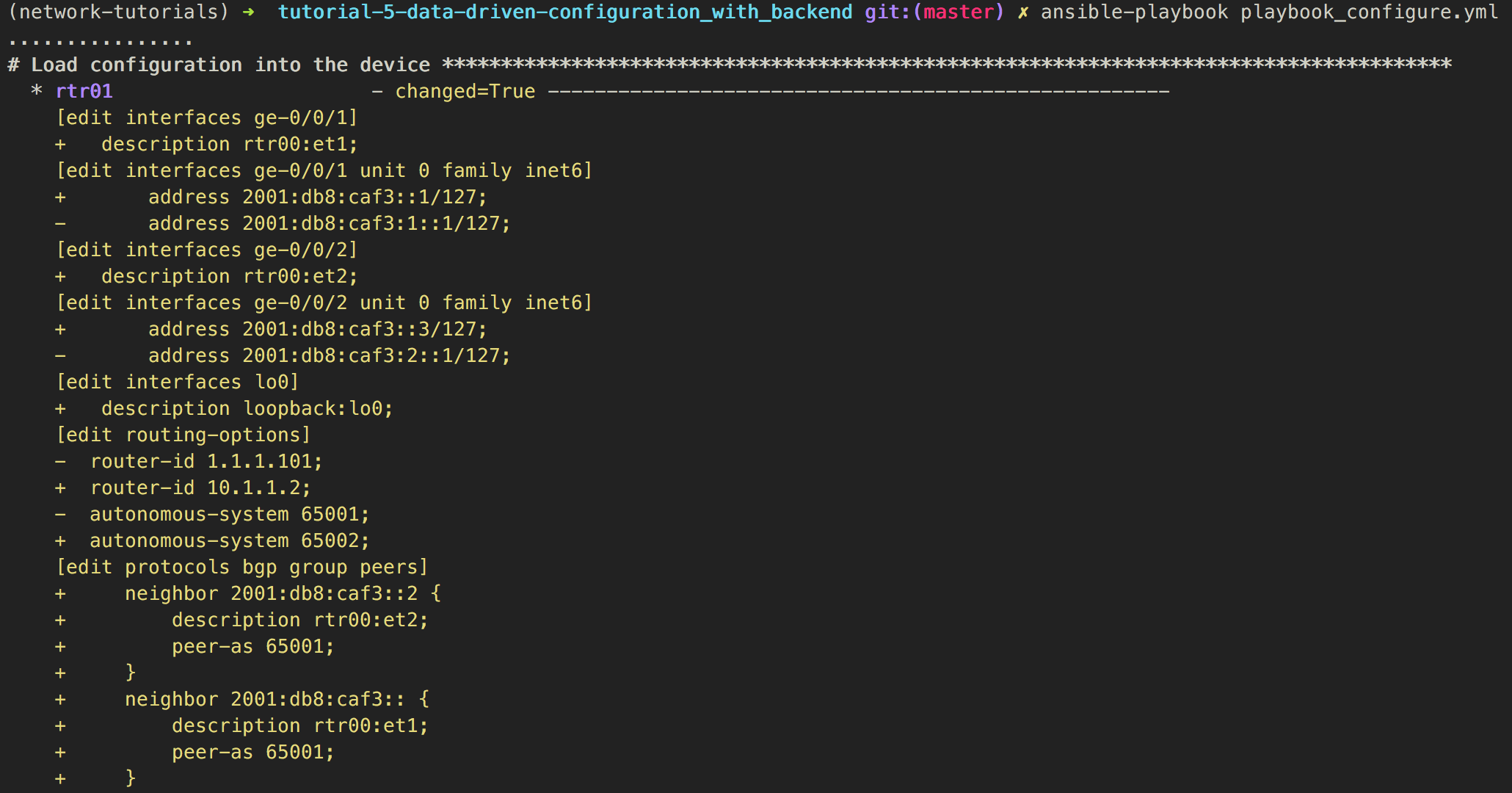

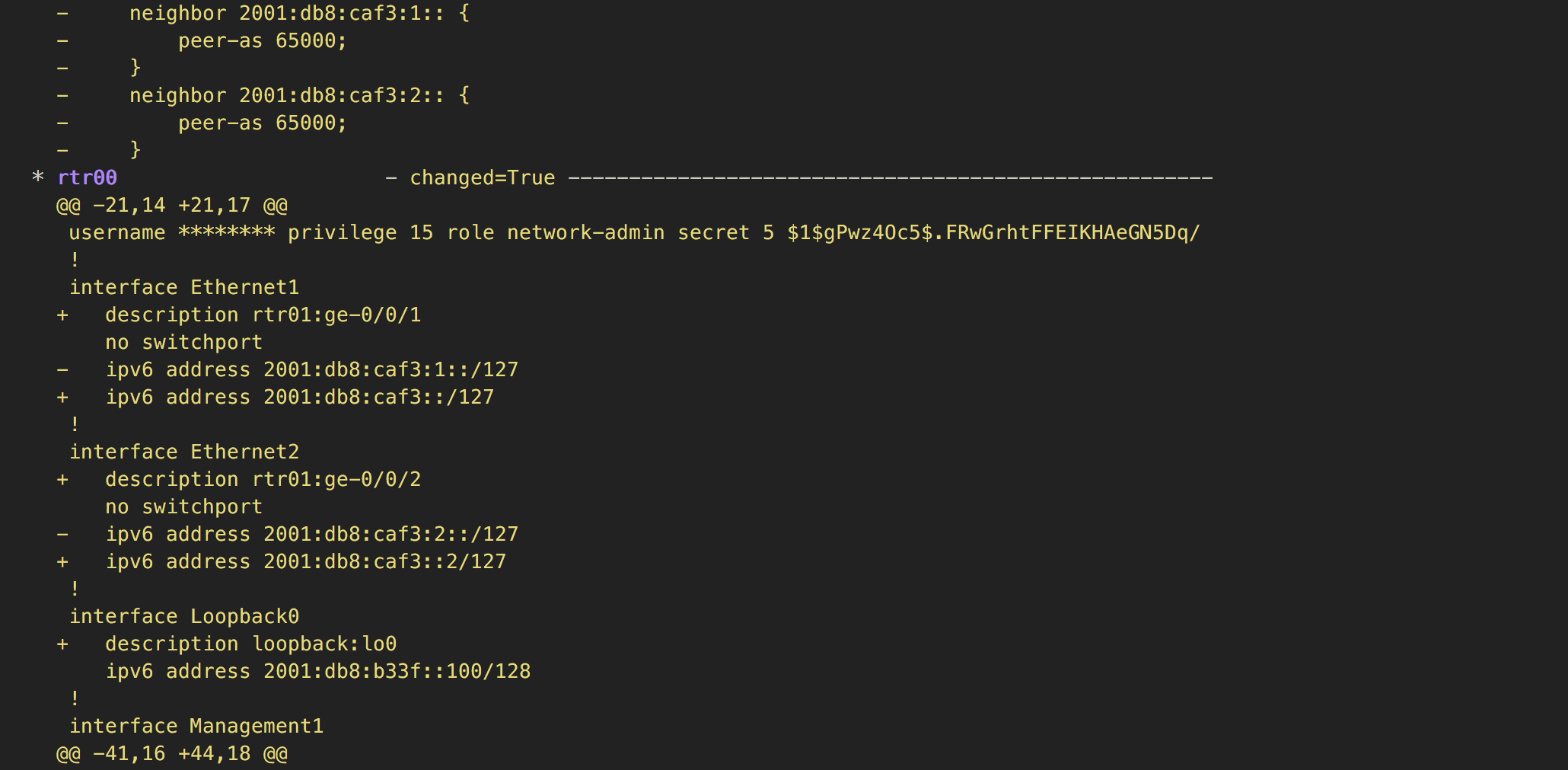

DDB - Configuration - Run (1)

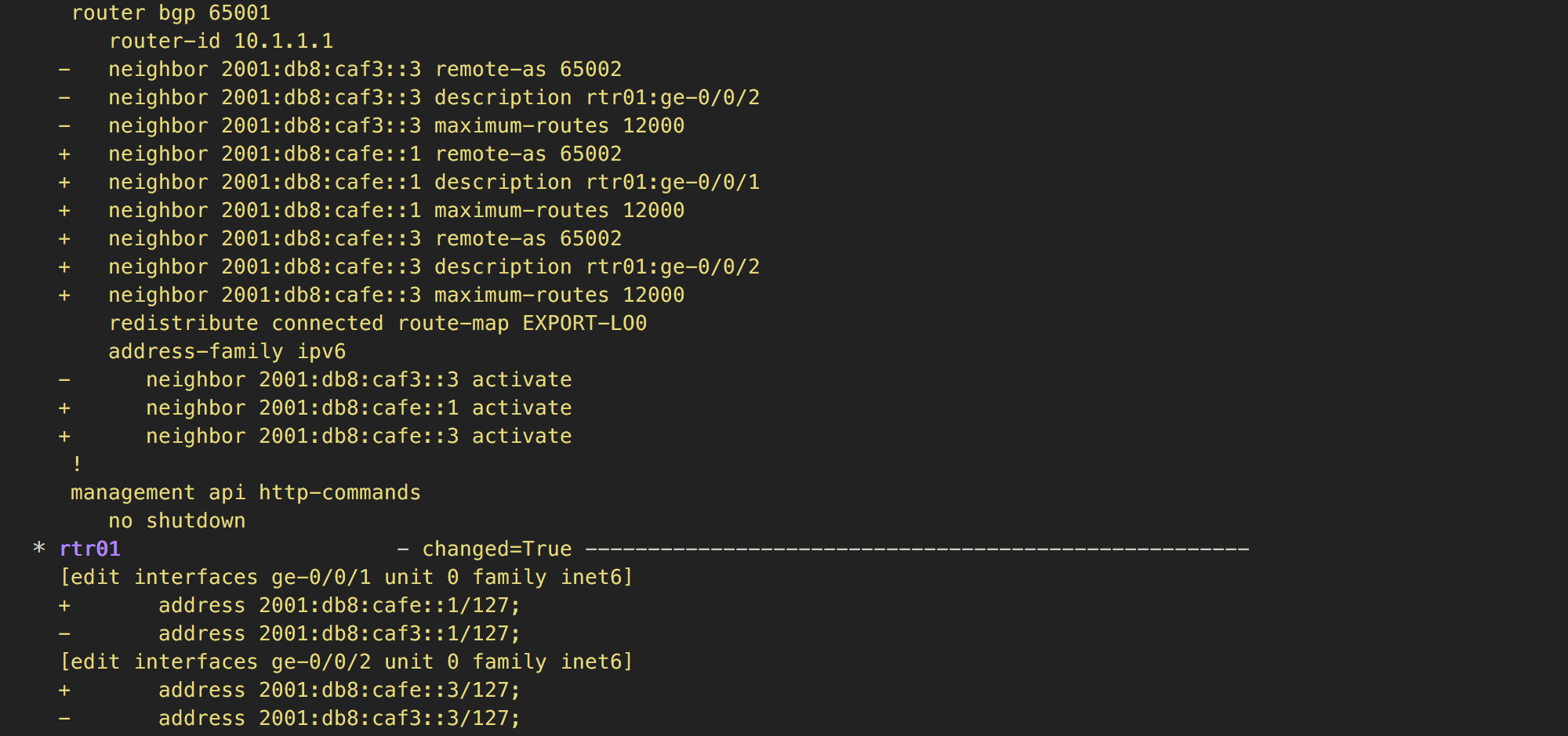

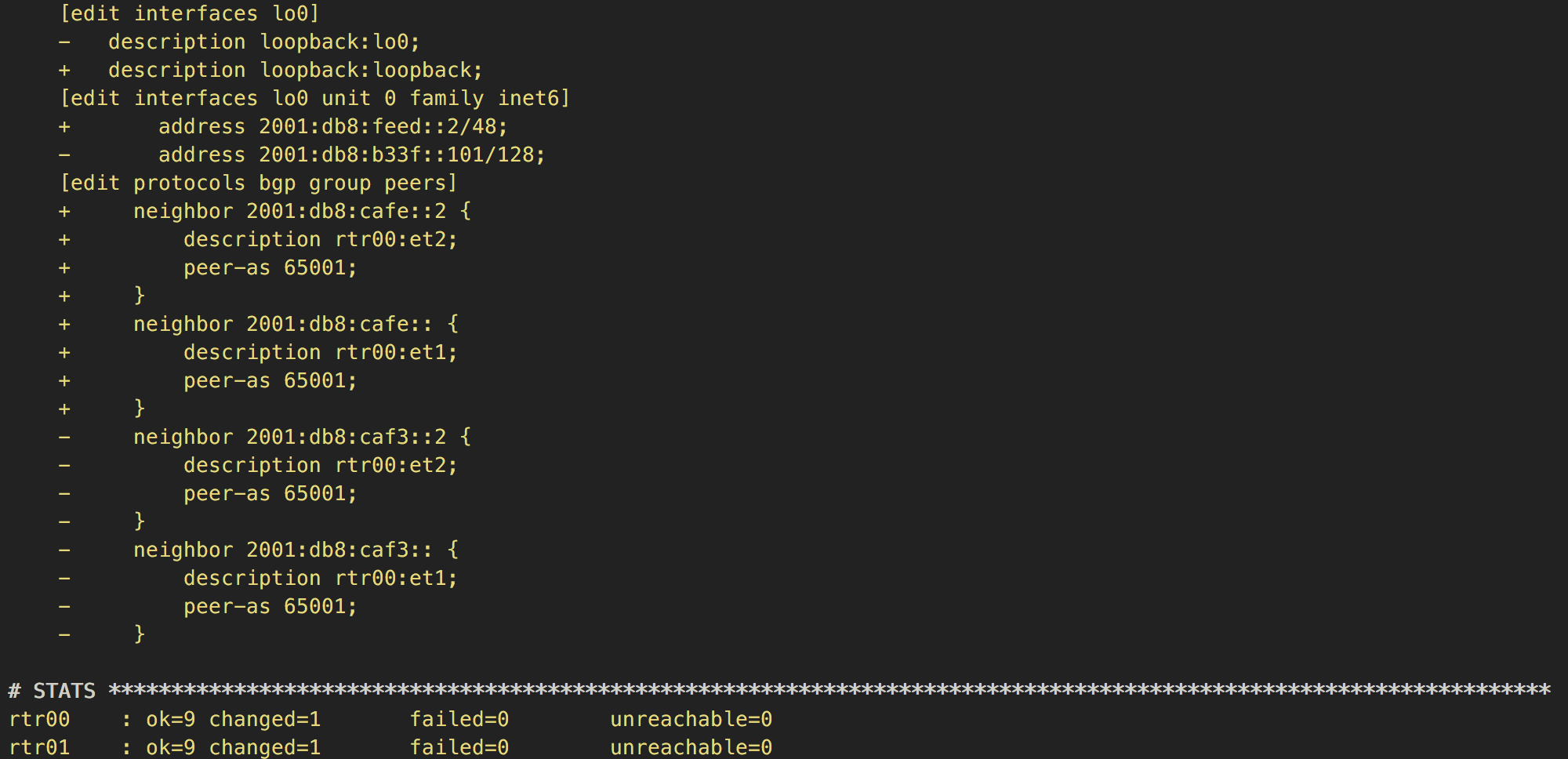

DDB - Configuration - Run (2)

DDB - Configuration - Run (3)

DDB - Verifying - Playbook (1)

- name: "Get facts from device"

napalm_get_facts:

hostname: "{{ host }}"

username: "{{ user }}"

dev_os: "{{ os }}"

password: "{{ password }}"

optional_args:

port: "{{ port }}"

filter: ['bgp_neighbors']

register: napalm_facts

DDB - Verifying - Playbook (2)

- name: "Check all BGP sessions are configured"

assert:

that:

- hostvars[item.value.attributes.connects_to_device]['interfaces'][item.value.attributes.connects_to_iface]['ipv6'][0].split('/')[0] in napalm_facts.ansible_facts.bgp_neighbors.global.peers

msg: "{{ '{}:{}'.format(item.value.attributes.connects_to_iface, item.value.attributes.connects_to_device) }} ({{ hostvars[item.value.attributes.connects_to_device]['interfaces'][item.value.attributes.connects_to_iface]['ipv6'][0].split('/')[0] }}) is missing"

with_dict: "{{ interfaces }}"

when: item.value.attributes.link_type == 'fabric'

tags: [print_action]

hostvars[item.value.attributes.connects_to_device]['interfaces']\

[item.value.attributes.connects_to_iface]['ipv6'][0].split('/')[0]\

in napalm_facts.ansible_facts.bgp_neighbors.global.peers

item.value # interface

interface.attributes.connects_to_device # peer

interface.attributes.connects_to_iface # peer_iface

napalm_facts.ansible_facts.bgp_neighbors.global.peers # my_configured_peers

hostvars[peer]['interfaces'][peer_iface]['ipv6'] in my_configured_peers

DDB - Verifying - Playbook (3)

- name: "Check BGP sessions are healthy"

assert:

that:

- napalm_facts.ansible_facts.bgp_neighbors.global.peers[hostvars[item.value.attributes.connects_to_device]['interfaces'][item.value.attributes.connects_to_iface]['ipv6'][0].split('/')[0]].is_up

msg: "{{ '{}:{}'.format(item.value.attributes.connects_to_iface, item.value.attributes.connects_to_device) }} ({{ hostvars[item.value.attributes.connects_to_device]['interfaces'][item.value.attributes.connects_to_iface]['ipv6'][0].split('/')[0] }}) is configured but down"

with_dict: "{{ interfaces }}"

when: item.value.attributes.link_type == 'fabric'

tags: [print_action]

- name: "Check BGP sessions are receiving prefixes"

assert:

that:

- napalm_facts.ansible_facts.bgp_neighbors.global.peers[hostvars[item.value.attributes.connects_to_device]['interfaces'][item.value.attributes.connects_to_iface]['ipv6'][0].split('/')[0]].address_family.ipv6.received_prefixes > 0

msg: "{{ '{}:{}'.format(item.value.attributes.connects_to_iface, item.value.attributes.connects_to_device) }} ({{ hostvars[item.value.attributes.connects_to_device]['interfaces'][item.value.attributes.connects_to_iface]['ipv6'][0].split('/')[0] }}) is not receiving any prefixes"

with_dict: "{{ interfaces }}"

when: item.value.attributes.link_type == 'fabric'

tags: [print_action]
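The long expressions in these tasks repeat the same lookup three times. As a sketch of the logic only, the function below resolves the far-end address once per link and then classifies the link as missing, down, not receiving prefixes, or ok. All data is hypothetical and the function names are assumptions; the real playbook expresses this as three separate `assert` tasks.

```python
def peer_ip_for(iface_data, hostvars):
    """Resolve the address configured on the far end of a link."""
    attrs = iface_data["attributes"]
    far_end = hostvars[attrs["connects_to_device"]]["interfaces"]
    return far_end[attrs["connects_to_iface"]]["ipv6"][0].split("/")[0]


def verify_links(interfaces, hostvars, configured_peers):
    """Classify every fabric link of a device, keyed by interface name."""
    report = {}
    for name, iface_data in sorted(interfaces.items()):
        if iface_data["attributes"].get("link_type") != "fabric":
            continue
        peer_ip = peer_ip_for(iface_data, hostvars)
        session = configured_peers.get(peer_ip)
        if session is None:
            report[name] = "missing"
        elif not session["is_up"]:
            report[name] = "configured but down"
        elif session["address_family"]["ipv6"]["received_prefixes"] <= 0:
            report[name] = "not receiving any prefixes"
        else:
            report[name] = "ok"
    return report


# Hypothetical backend data and device state, for illustration only.
hostvars = {
    "rtr01": {"interfaces": {
        "ge-0/0/1": {"ipv6": ["2001:db8:cafe::1/127"]},
        "ge-0/0/2": {"ipv6": ["2001:db8:cafe::3/127"]},
    }},
}
interfaces = {
    "et1": {"attributes": {"link_type": "fabric",
                           "connects_to_device": "rtr01",
                           "connects_to_iface": "ge-0/0/1"}},
    "et2": {"attributes": {"link_type": "fabric",
                           "connects_to_device": "rtr01",
                           "connects_to_iface": "ge-0/0/2"}},
}
configured_peers = {
    "2001:db8:cafe::1": {
        "is_up": True,
        "address_family": {"ipv6": {"received_prefixes": 1}},
    },
}

report = verify_links(interfaces, hostvars, configured_peers)
print(report)
```

Because the loop runs over the links known to the backend rather than over the sessions reported by the device, a link with no session configured shows up as "missing" instead of going unnoticed.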

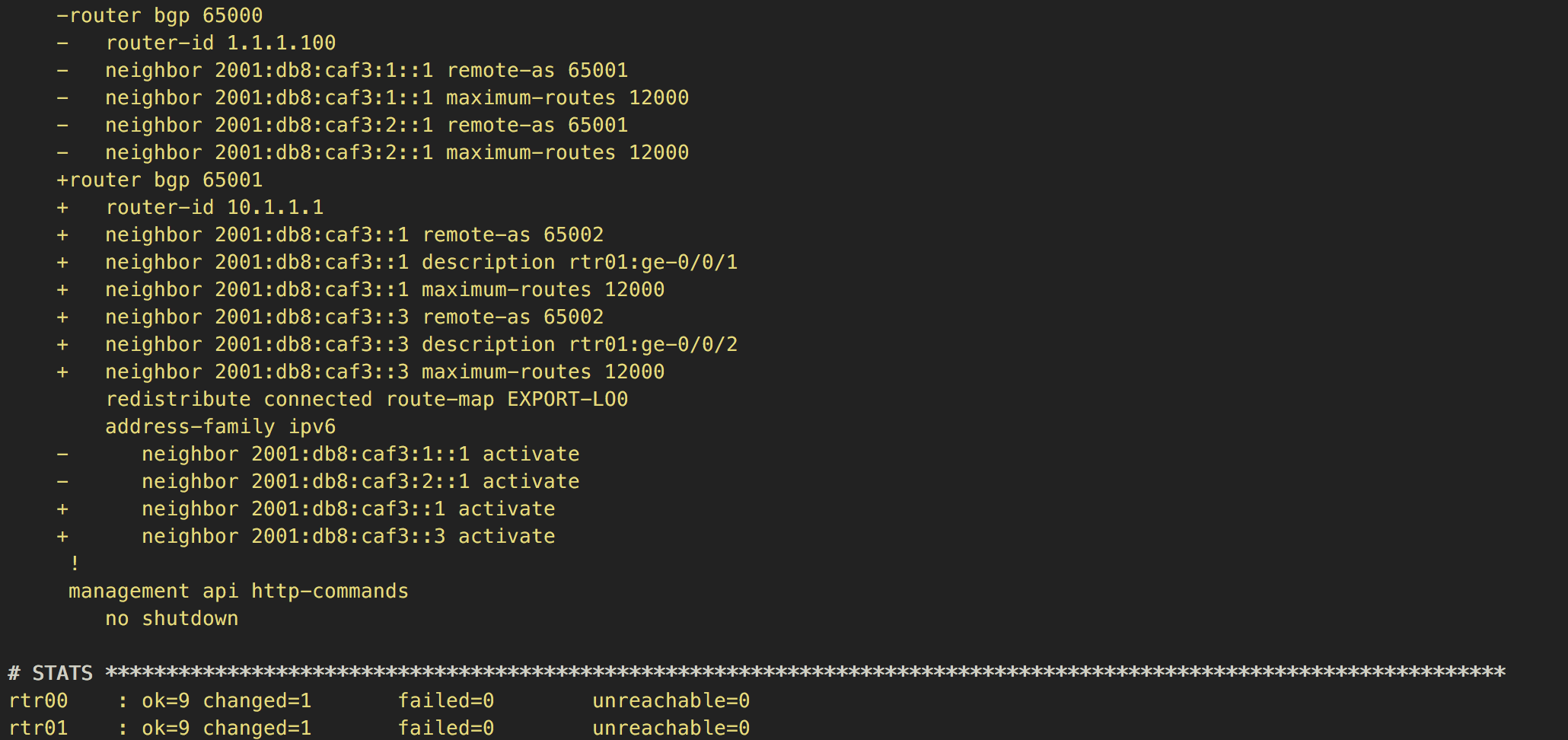

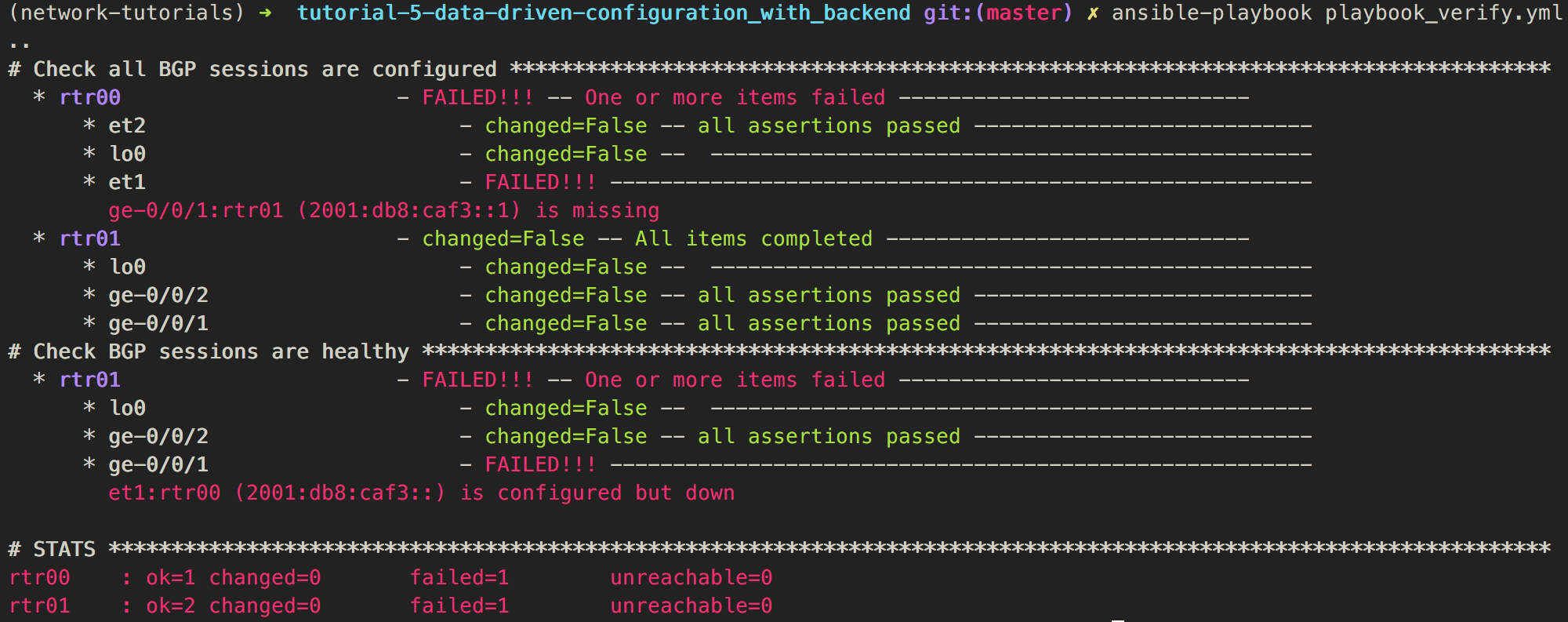

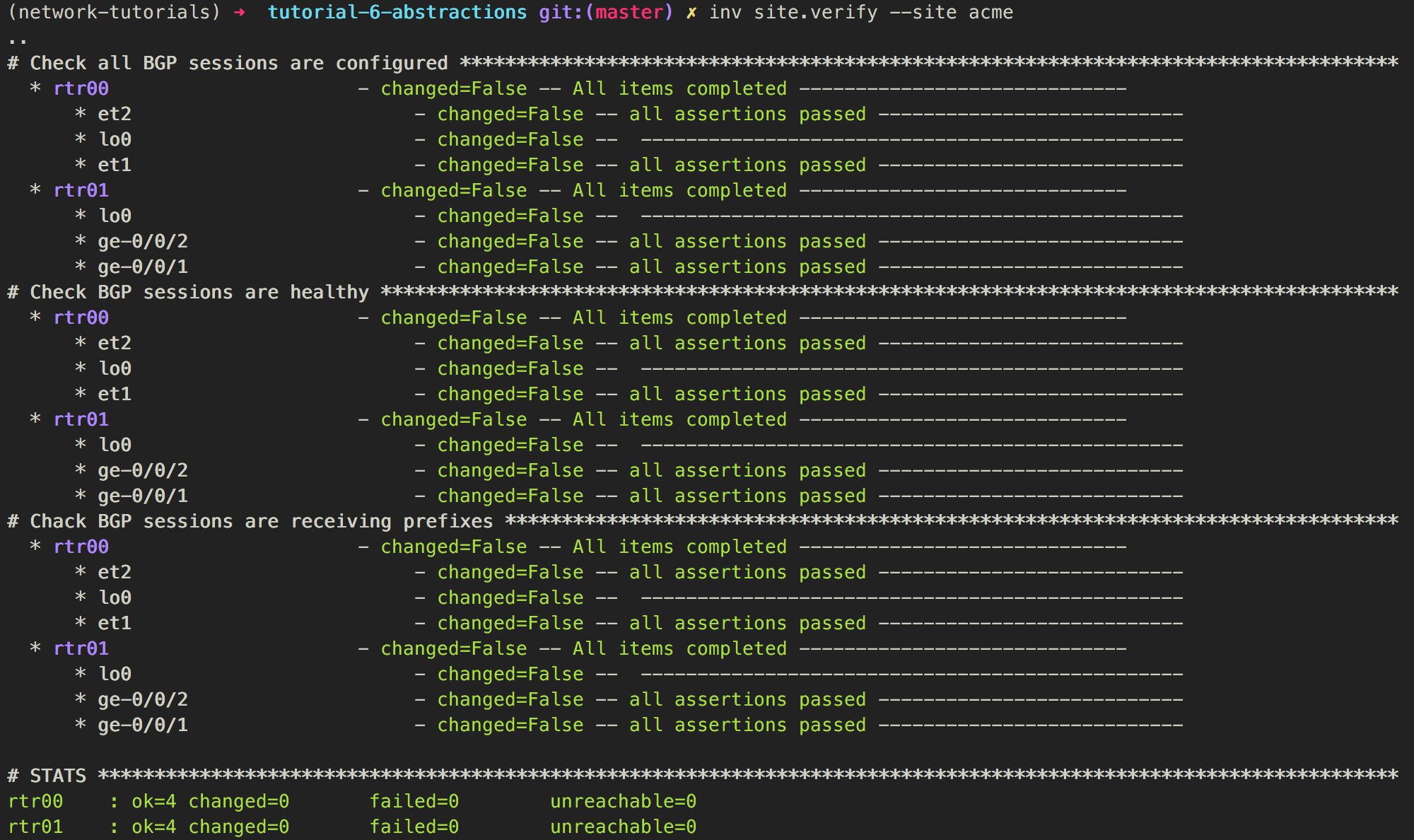

DDB - Verifying - Run (1)

We verify links, not BGP sessions


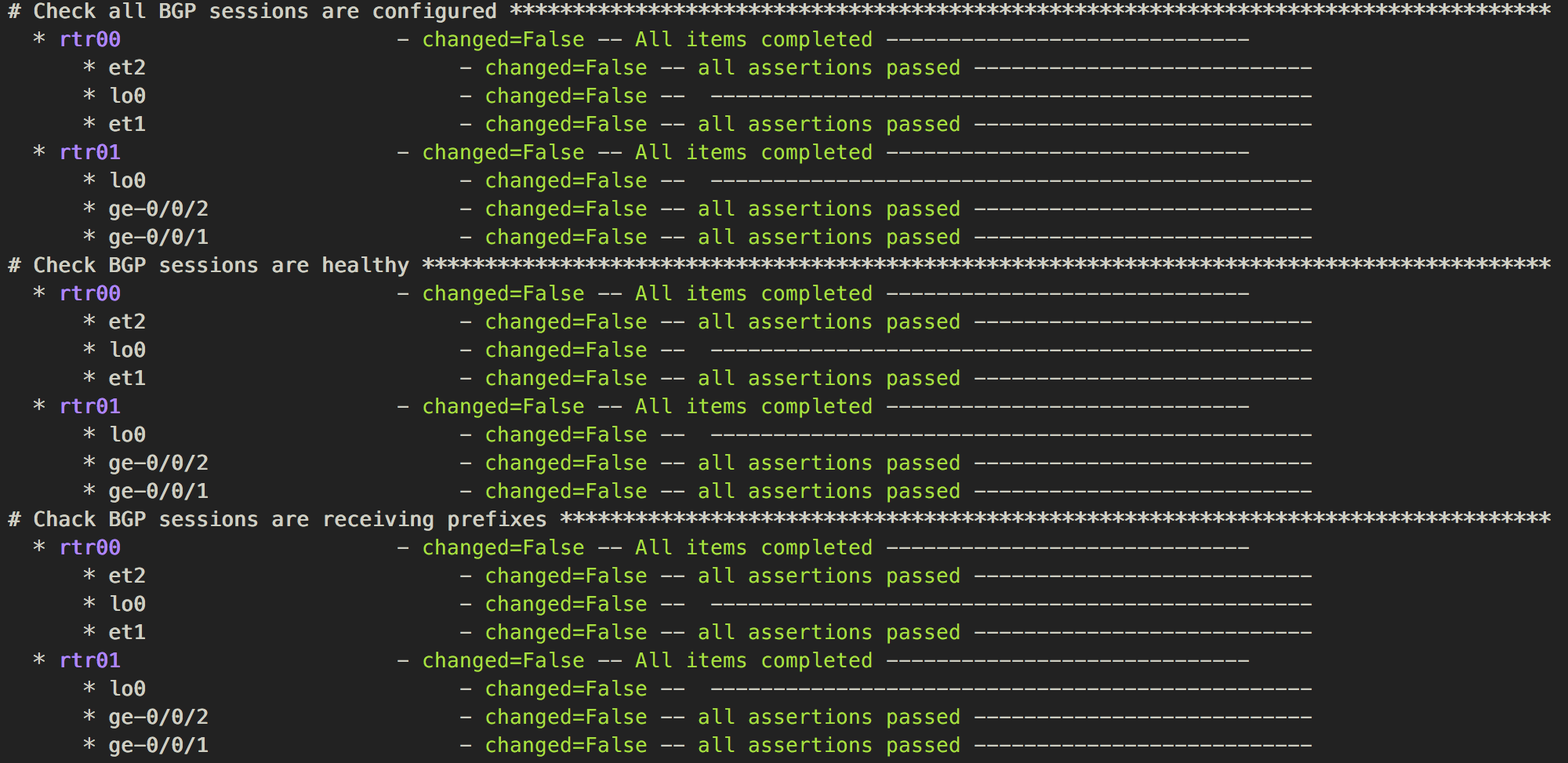

DDB - Verifying - Run (2)

We can easily correlate BGP sessions with interfaces, which may allow us to infer the reason behind the errors. For example, in the picture above it's easy to see that 2001:db8:caf3:: is down on rtr01 because 2001:db8:caf3::1 is not configured on rtr00.

Summary

- We replaced all those YAML files with a backend (nsot)

- We added richer data to nsot than we previously had

- We leveraged the richer data to build the configurations with less information (BGP peers were inferred)

- We leveraged the richer data to help diagnose problems

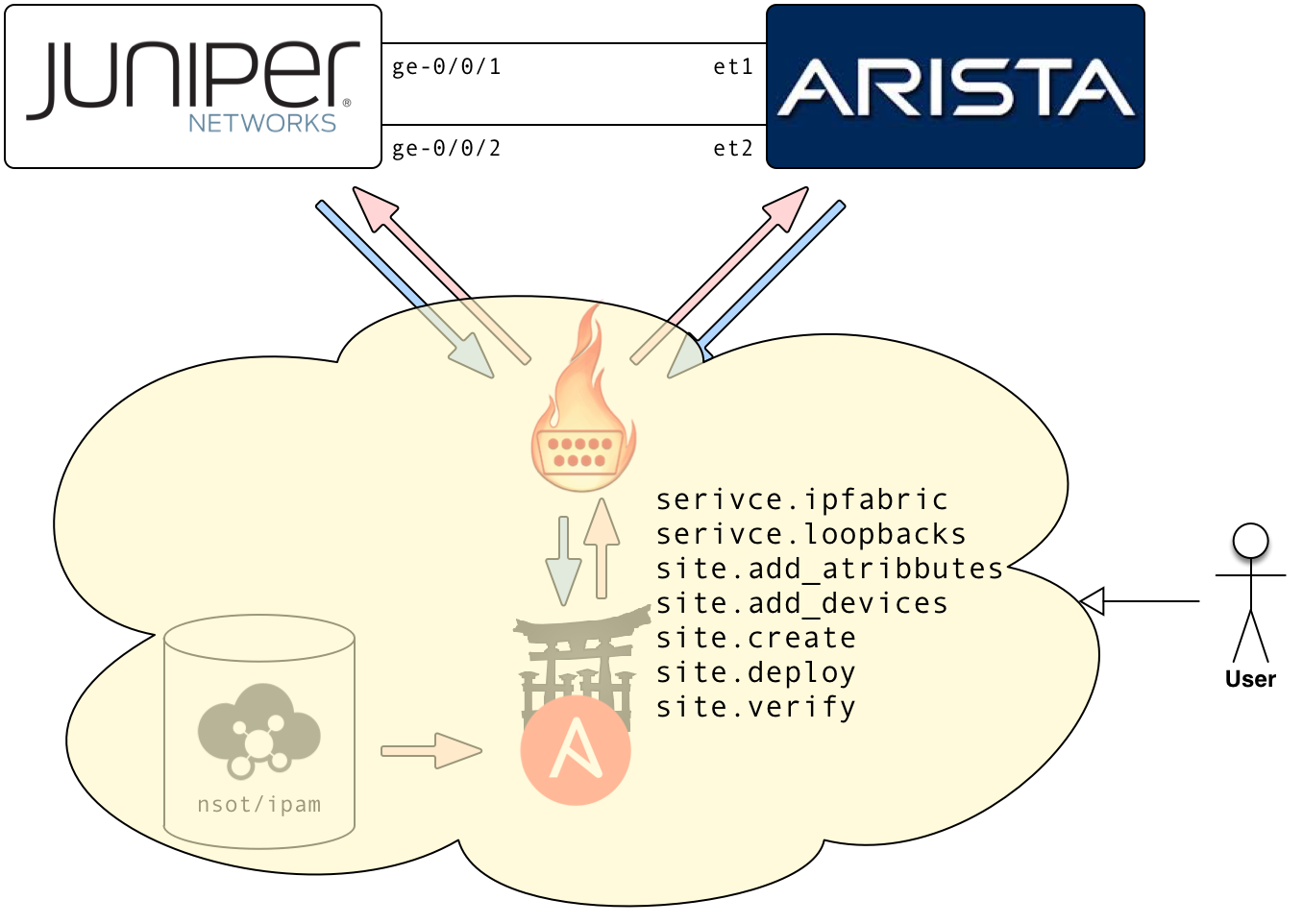

6 - Abstractions

Link to the code on GitHub

Objectives

We are going to simplify operations. Sites and services will be abstracted and defined in simple definition files and added to the backend using an easy-to-use tool. Finally, ansible will be hidden behind the same tool.

ABSTRACTIONS - Site definition - Acme

data/acme/devices.yml

---

devices:

rtr00:

os: eos

host: 127.0.0.1

domain: acme.com

user: vagrant

password: vagrant

port: '12443'

asn: '65001'

router_id: 10.1.1.1

rtr01:

os: junos

host: 127.0.0.1

domain: acme.com

user: vagrant

password: ''

port: '12203'

asn: '65002'

router_id: 10.1.1.2

data/acme/attributes.yml

---

attributes:

Device:

os: {}

host: {}

user: {}

password:

constraints:

allow_empty: true

port: {}

asn: {}

router_id: {}

domain: {}

Network:

type: {}

service: {}

Interface:

link_type: {}

connects_to_device: {}

connects_to_iface: {}

data/acme/services.yml

---

loopbacks:

network_ranges:

loopbacks: 2001:db8:feed::/48

definition: {}

ipfabric:

network_ranges:

fabric_links: 2001:db8:cafe::/48

definition:

links:

- left_device: rtr00

left_iface: et1

right_device: rtr01

right_iface: ge-0/0/1

- left_device: rtr00

left_iface: et2

right_device: rtr01

right_iface: ge-0/0/2
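The service definition only names a range and the links. Something has to turn that into concrete addresses. Below is a minimal sketch of one possible allocation scheme, using the standard `ipaddress` module: each link gets the next `/127` from `fabric_links`, with the lower address on the left side. This scheme and the `allocate` function are assumptions made for illustration, not necessarily what the tutorial's tool does.

```python
import ipaddress
from itertools import islice

# Range and links copied from data/acme/services.yml above.
fabric_range = ipaddress.ip_network("2001:db8:cafe::/48")
links = [
    {"left_device": "rtr00", "left_iface": "et1",
     "right_device": "rtr01", "right_iface": "ge-0/0/1"},
    {"left_device": "rtr00", "left_iface": "et2",
     "right_device": "rtr01", "right_iface": "ge-0/0/2"},
]


def allocate(fabric_range, links):
    """Give each link the next /127 and one address per side."""
    # subnets() is lazy, so only as many /127s as needed are generated.
    subnets = islice(fabric_range.subnets(new_prefix=127), len(links))
    plan = []
    for link, subnet in zip(links, subnets):
        plan.append({
            (link["left_device"], link["left_iface"]): "{}/127".format(subnet[0]),
            (link["right_device"], link["right_iface"]): "{}/127".format(subnet[1]),
        })
    return plan


plan = allocate(fabric_range, links)
for entry in plan:
    for (device, iface), address in entry.items():
        print(device, iface, address)
```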

ABSTRACTIONS - Site definition - Evil

data/evil/devices.yml

---

devices:

evil00:

os: eos

host: 127.0.0.1

domain: evilcorp.com

user: vagrant

password: vagrant

port: '12443'

asn: '65666'

router_id: 10.6.66.1

evil01:

os: junos

host: 127.0.0.1

domain: evil.com

user: vagrant

password: ''

port: '12203'

asn: '65666'

router_id: 10.6.66.2

data/evil/attributes.yml

---

attributes:

Device:

os: {}

host: {}

user: {}

password:

constraints:

allow_empty: true

port: {}

asn: {}

router_id: {}

domain: {}

Network:

type: {}

service: {}

Interface:

link_type: {}

connects_to_device: {}

connects_to_iface: {}

data/evil/services.yml

---

loopbacks:

network_ranges:

loopbacks: 2001:db8:dead::/48

definition: {}

ipfabric:

network_ranges:

fabric_links: 2001:db8:c0ff::/48

definition:

links:

- left_device: rtr00

left_iface: et1

right_device: rtr01

right_iface: ge-0/0/1

- left_device: rtr00

left_iface: et2

right_device: rtr01

right_iface: ge-0/0/2

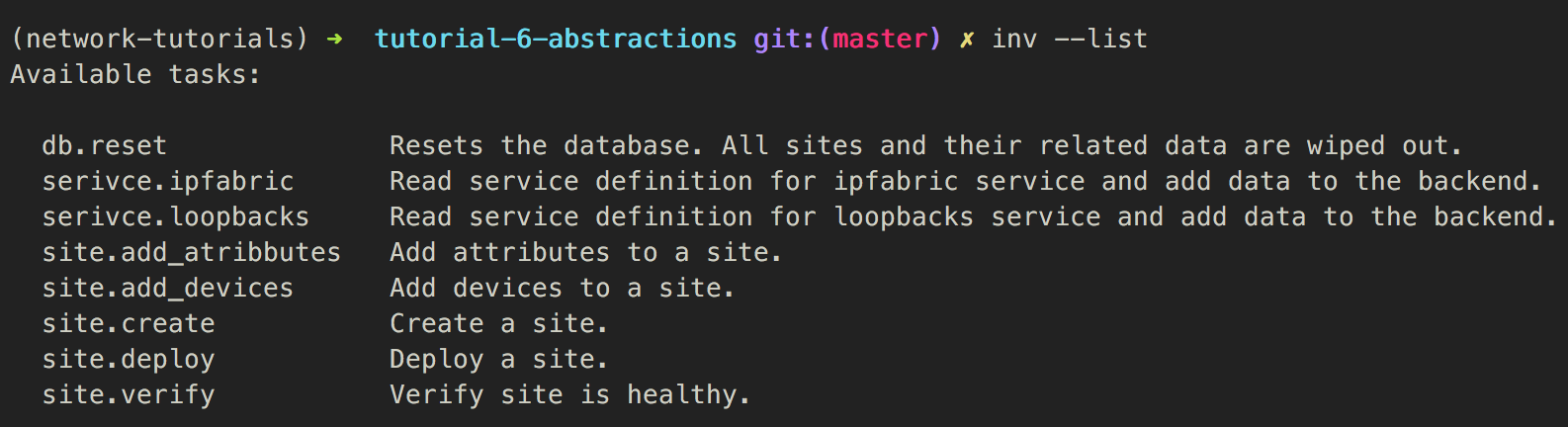

Abstractions - Command-line

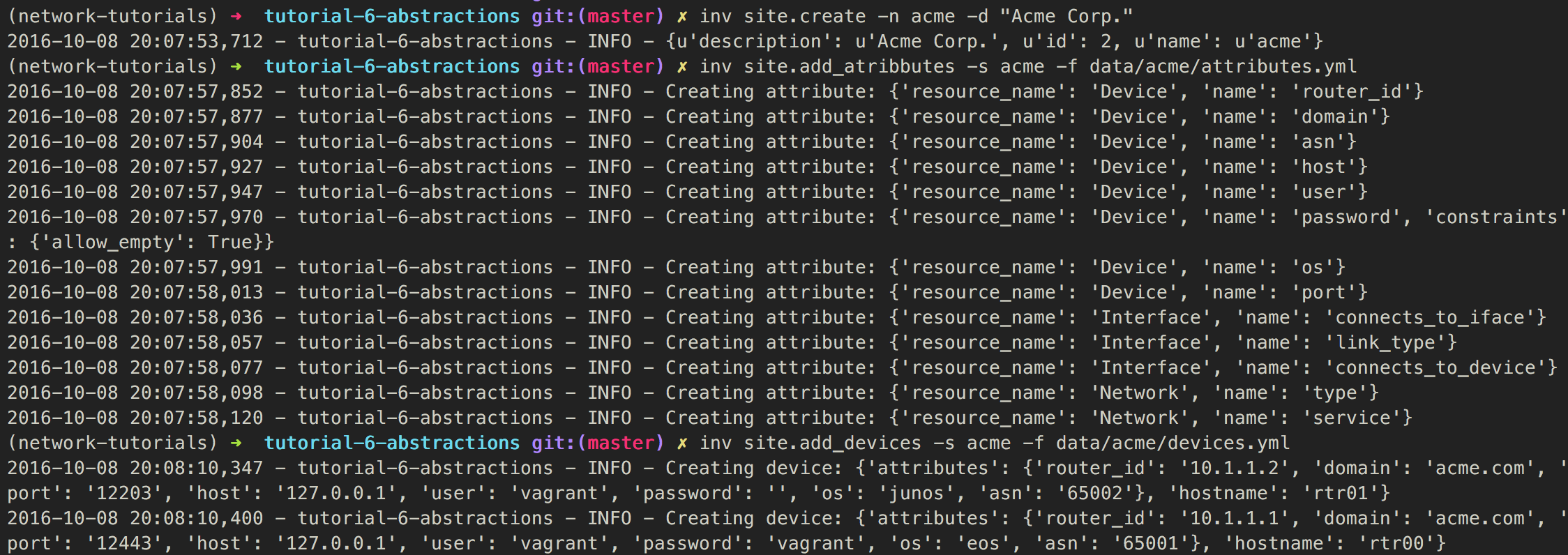

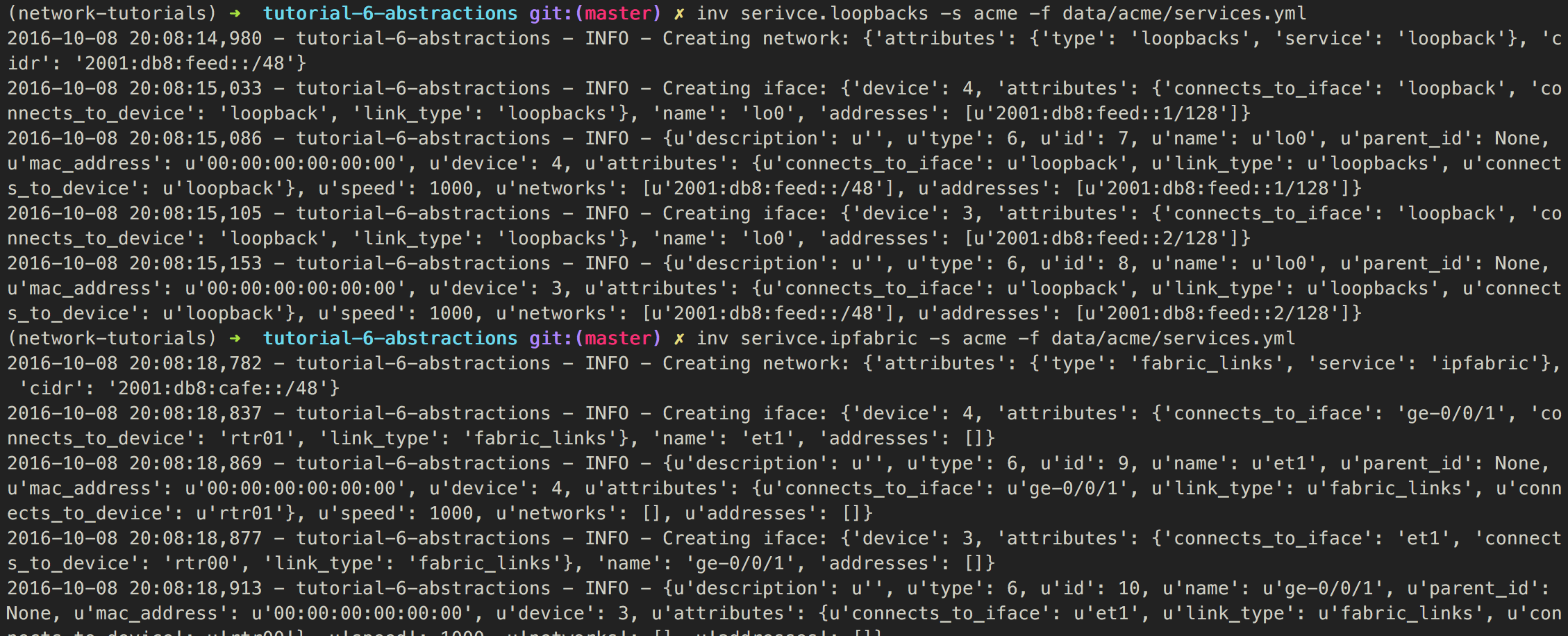

Abstractions - Creating the sites - Acme(1)

Abstractions - Creating the sites - Acme(2)

Abstractions - Creating the sites - Evil

inv site.create --name evil

inv site.add_attributes -s evil -f data/evil/attributes.yml

inv site.add_devices -s evil -f data/evil/devices.yml

inv service.loopbacks -s evil -f data/evil/services.yml

inv service.ipfabric -s evil -f data/evil/services.yml

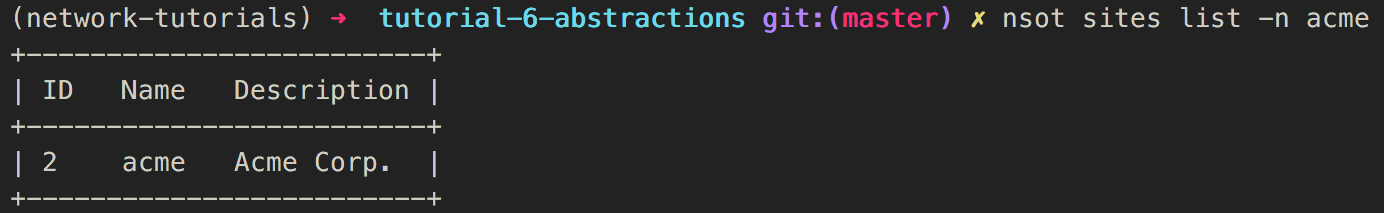

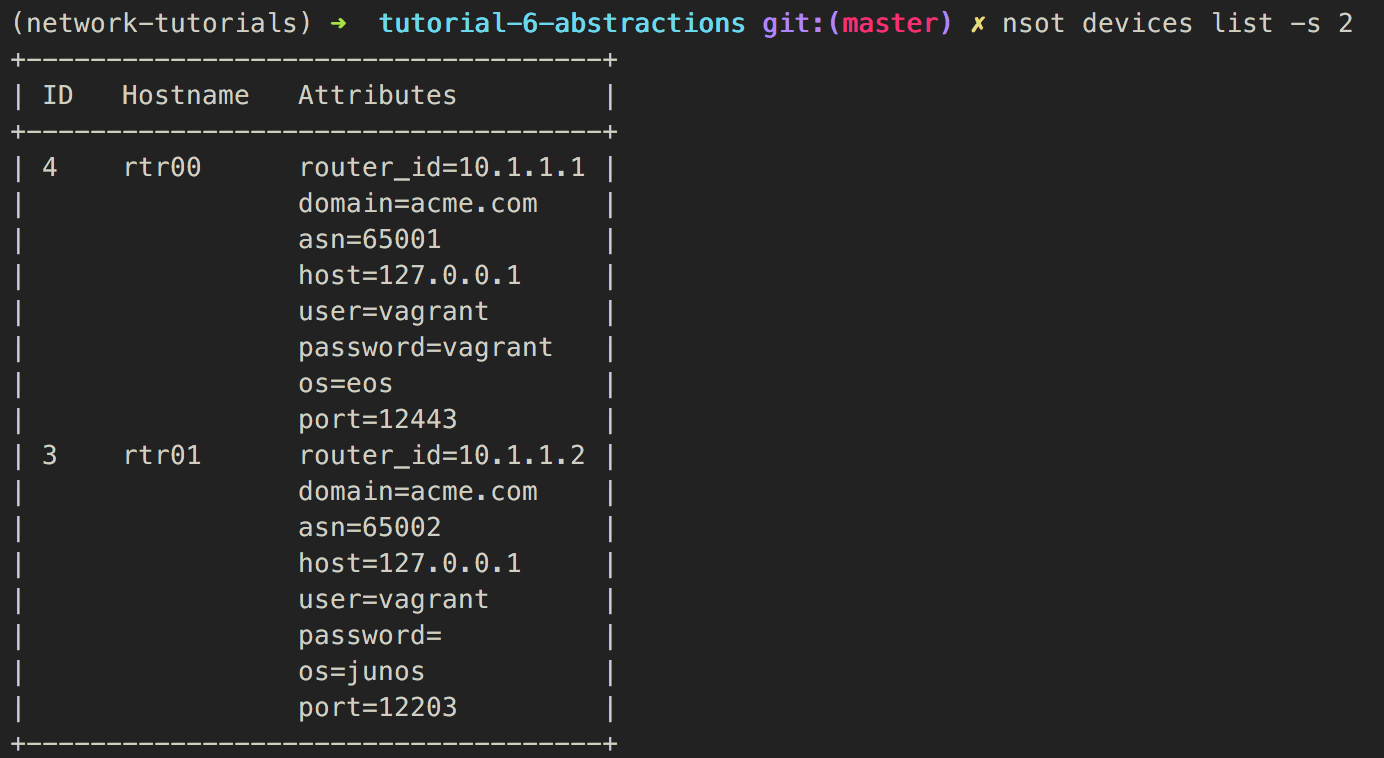

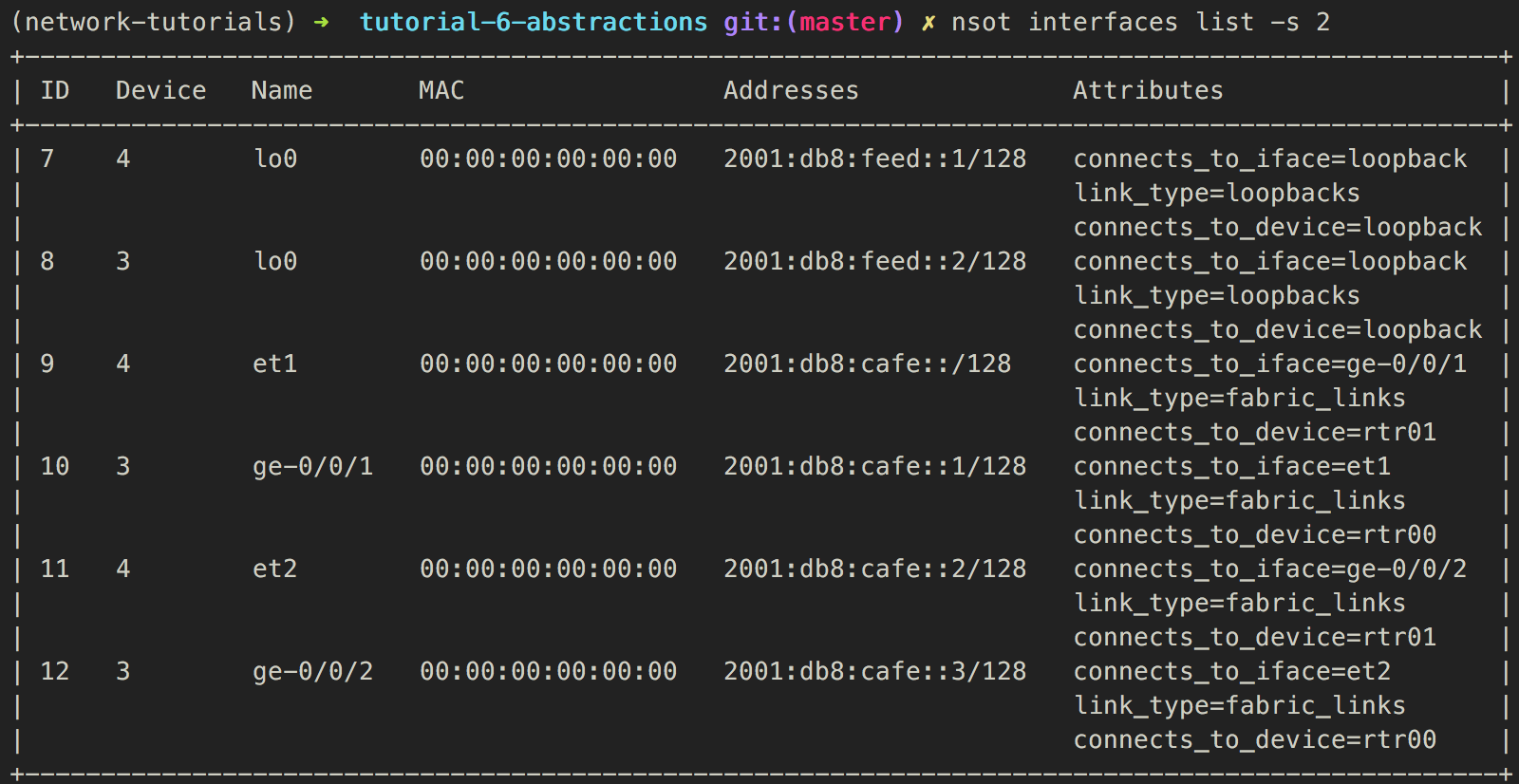

Abstractions - Verify data - Acme

For demonstrational purposes ;)


Abstractions - Verify data - Evil

For demonstrational purposes ;)


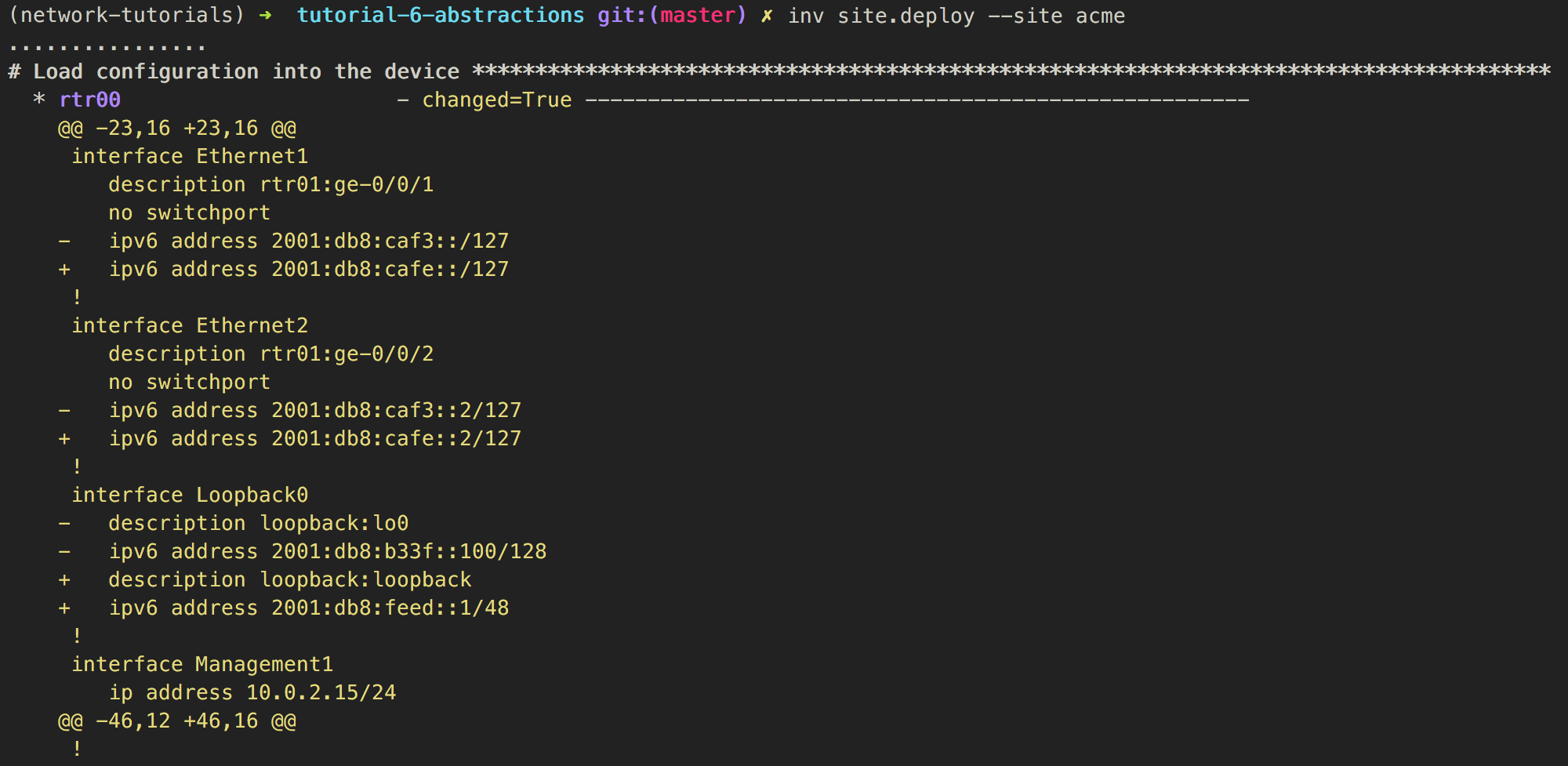

Abstractions - Deploy site - Acme (1)

Abstractions - Deploy site - Acme (2)

Abstractions - Deploy site - Acme (3)

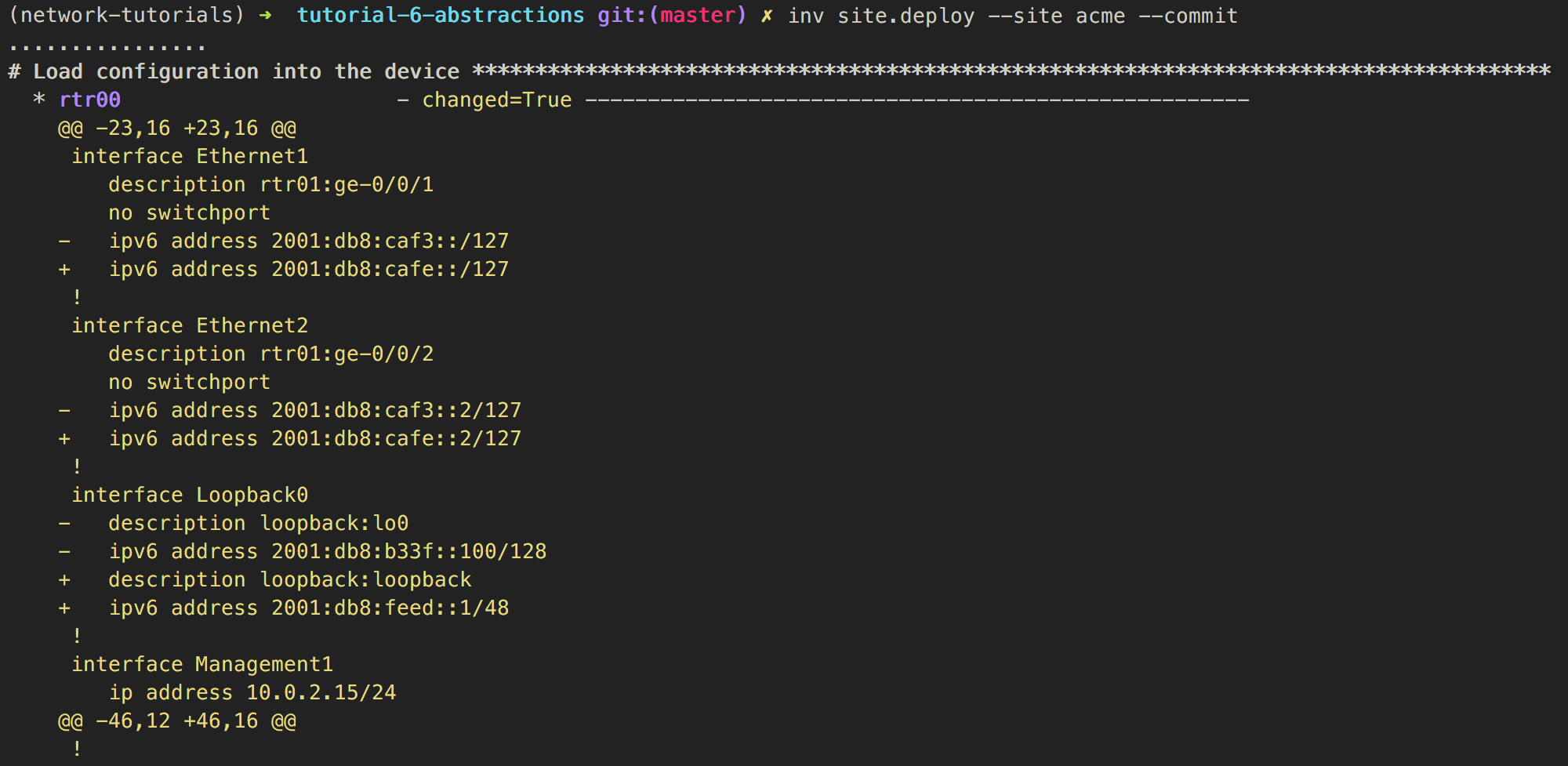

Abstractions - Deploy site - Acme (4)

Abstractions - Deploy site - Acme (5)

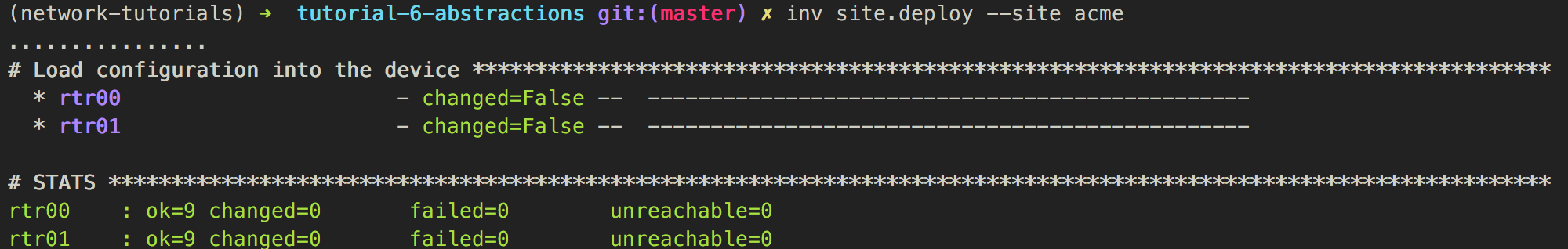

Abstractions - Verify site - Acme

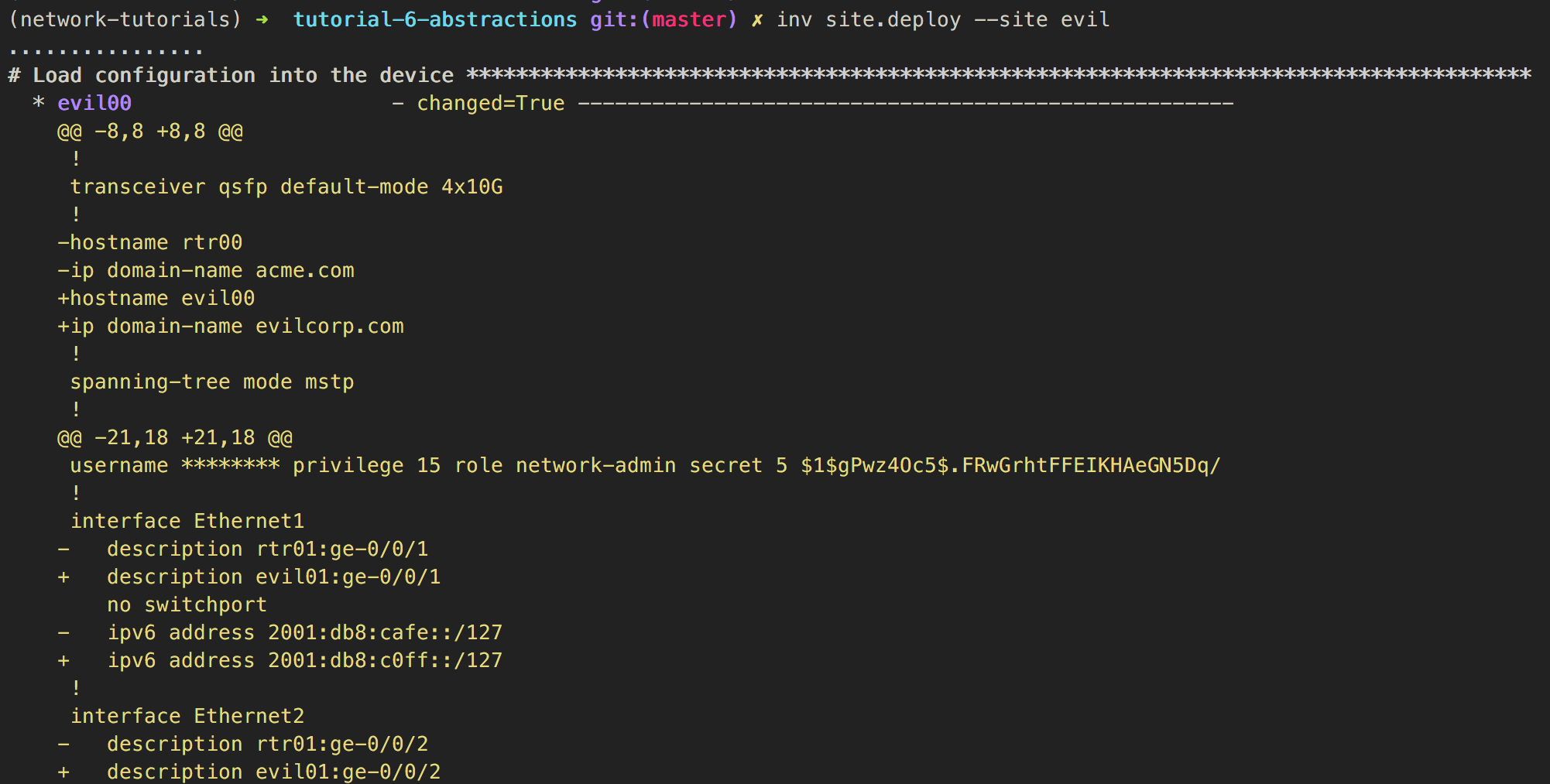

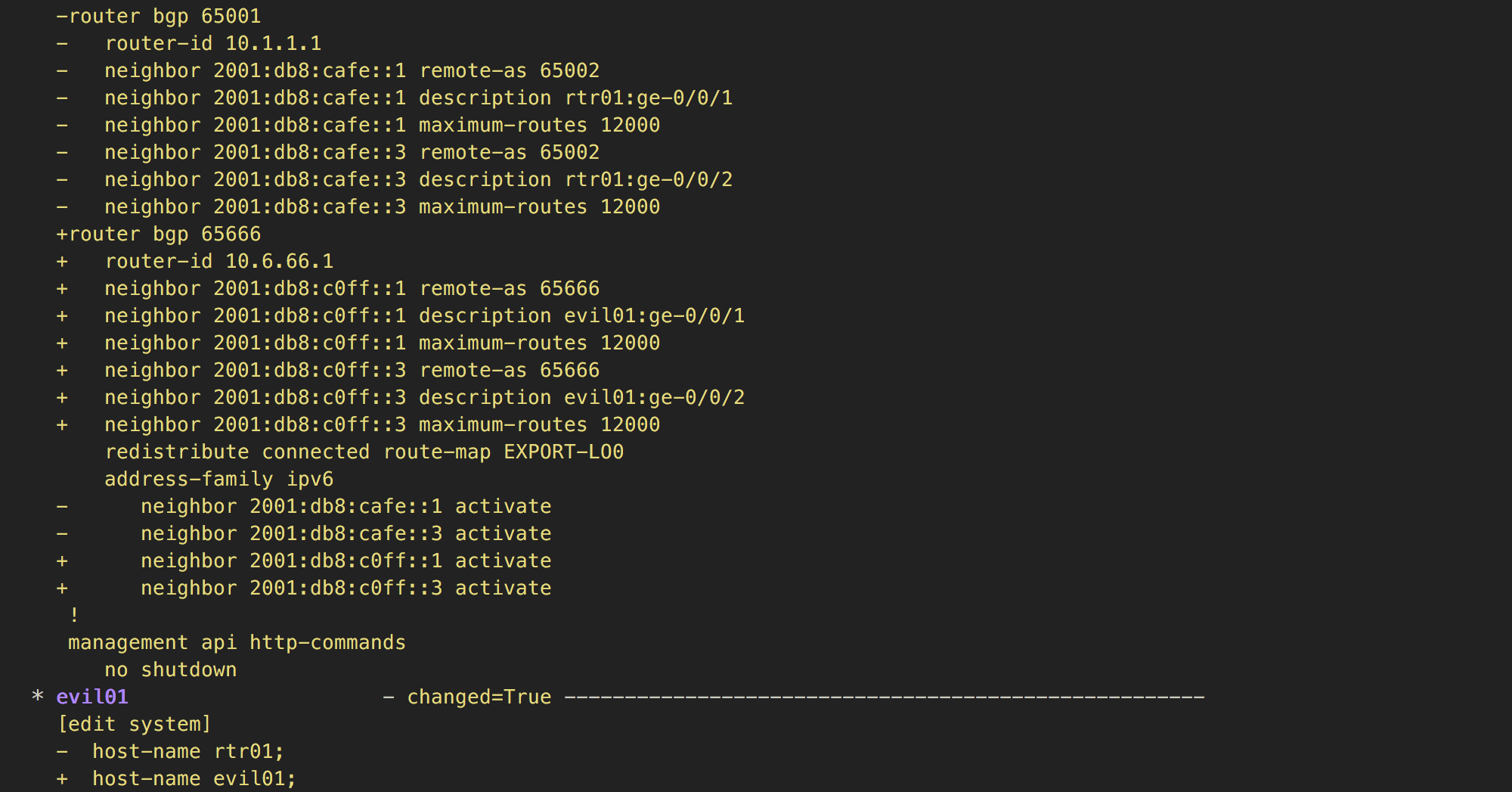

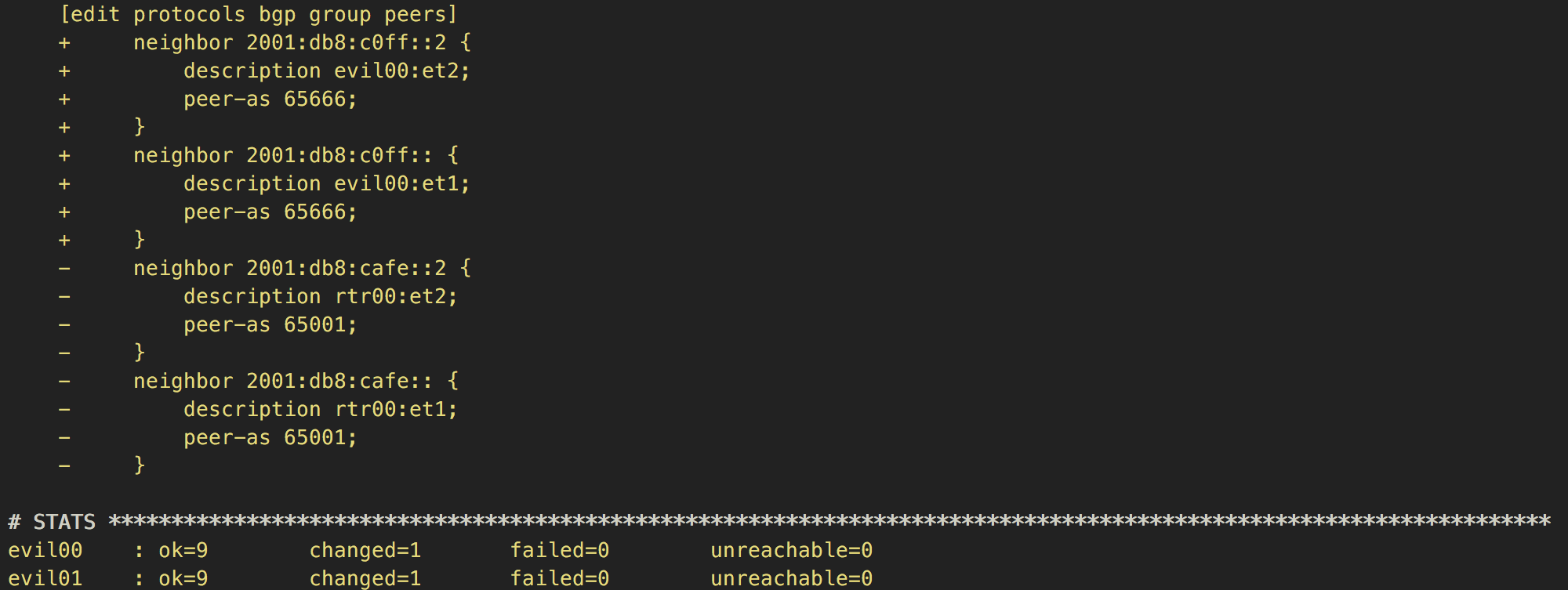

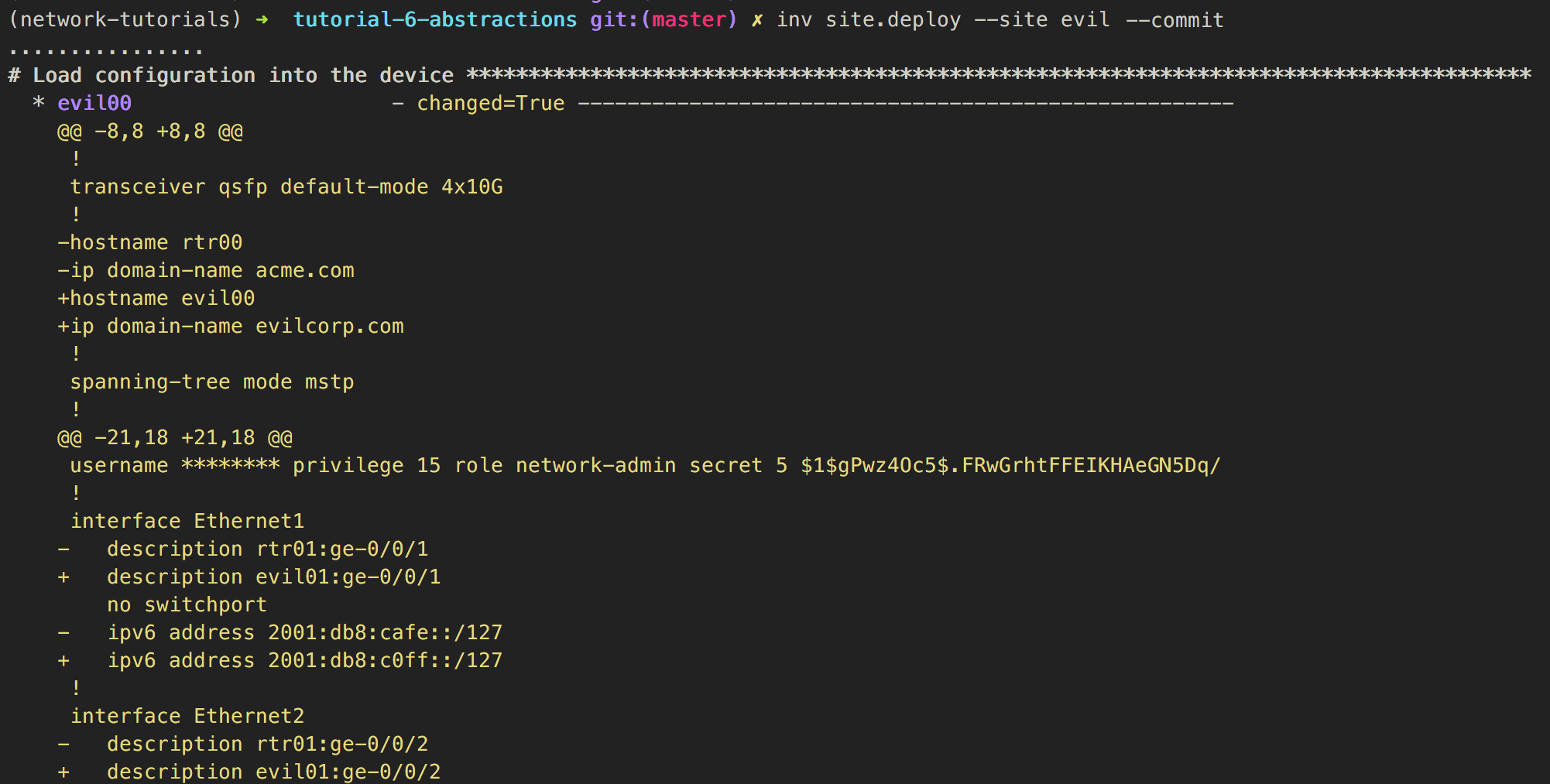

Abstractions - Deploy site - Evil (1)

Abstractions - Deploy site - Evil (2)

Abstractions - Deploy site - Evil (3)

Abstractions - Deploy site - Evil (4)

Abstractions - Deploy site - Evil (5)

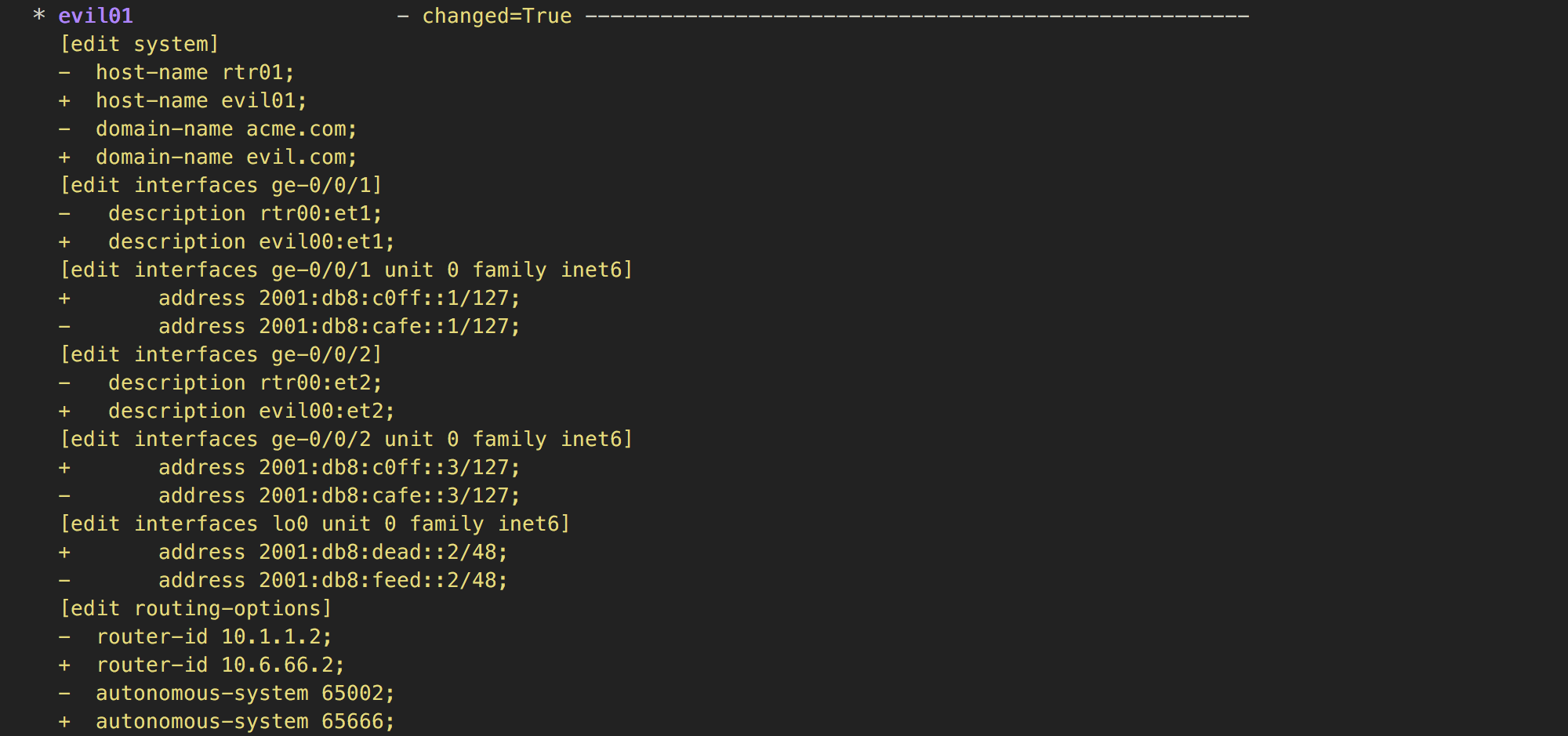

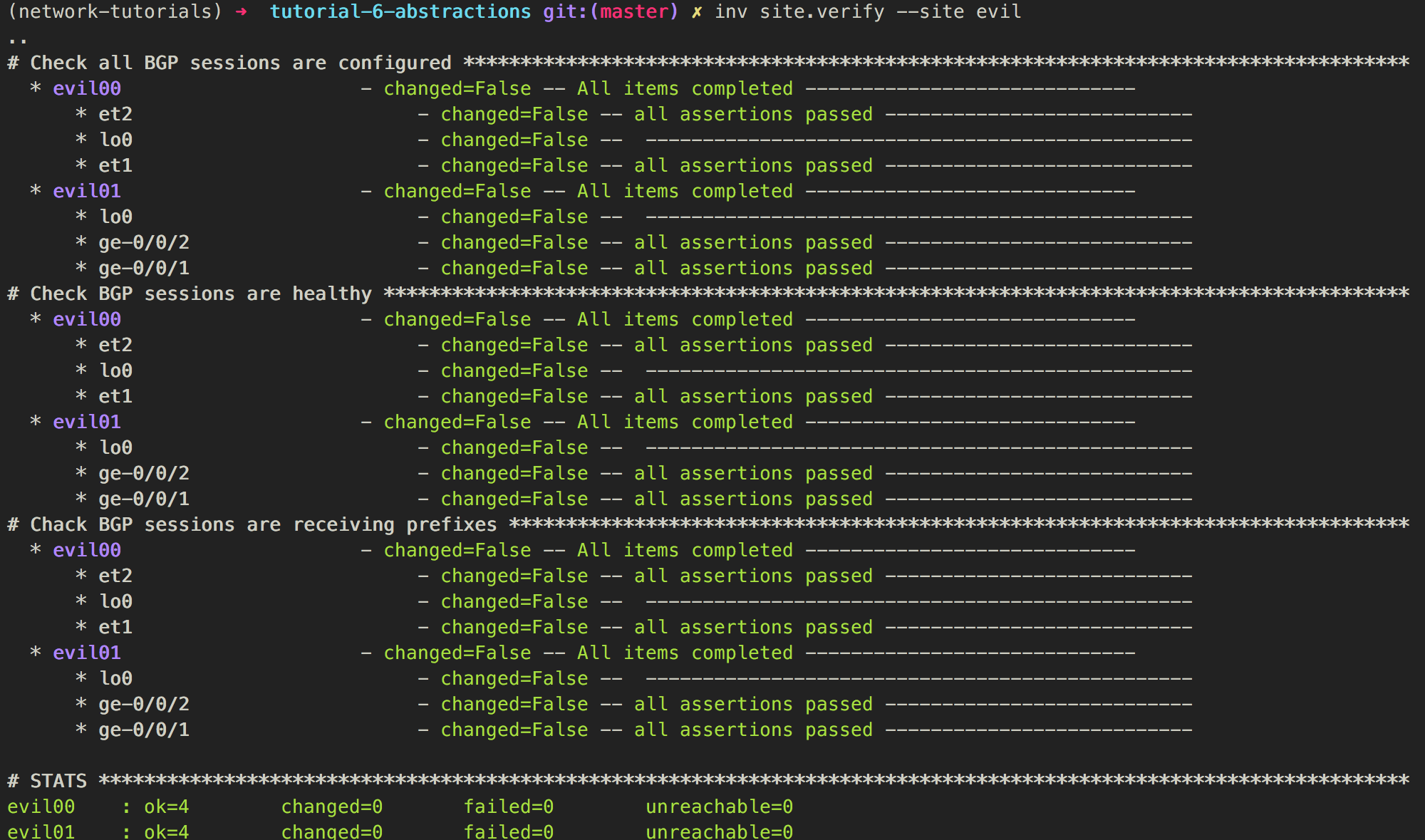

Abstractions - Verify site - Evil

Summary

- We built a tool that focused on services

- The tool reads simple definition files that users can easily create and manipulate, and makes the necessary changes in the backend

- As the tool is very simple and easy to use, it can be used by any type of user, not only by network engineers

- The tool could be a web form rather than a CLI tool